Building a Resilient AI Architecture: Why Model Flexibility Is the Defining CIO Decision for 2026

As the enterprise landscape shifts from experimental generative AI pilots to full-scale production environments, the role of the Chief Information Officer (CIO) is undergoing a fundamental transformation, moving from a manager of systems to a strategist of intelligence. According to a comprehensive new industry report titled "7 career-making AI decisions for CIOs in 2026," the ability of an organization to scale artificial intelligence successfully will hinge on a series of critical architectural choices made today. This report, which provides a roadmap for navigating the volatile AI sector over the next twenty-four months, has identified "Decision #5: Model Flexibility" as the primary determinant of whether a company’s AI strategy will flourish or become an anchor of technical debt. The core premise is that AI will either be built on an architecture designed to evolve as Large Language Model (LLM) capabilities shift, or it will be locked into assumptions that were only accurate for a brief window in 2024.

This fifth installment in a seven-part series follows a rigorous examination of the preceding four pillars of modern AI leadership. To understand the gravity of model flexibility, one must view it within the context of the broader CIO referendum currently taking place across the Fortune 500. The journey began with Decision #1, which established AI as a leadership referendum—a test of a CIO’s ability to align technology with business value. This was followed by Decision #2, focusing on explainability as the gatekeeper for production-ready AI, and Decision #3, which addressed the accountability gap inherent in AI agents embedded in critical workflows. Most recently, Decision #4 highlighted the "cost of vendor regret," emphasizing that the AI stack itself has become a career-defining calculation. Now, as organizations look toward 2026, the focus shifts to the model layer, where the risk of architectural rigidity poses a significant threat to long-term viability.

The Evolution of the AI Architecture: A Historical Context

To appreciate why model flexibility has become a "career-making" decision, it is necessary to look at the rapid chronology of the generative AI era. In late 2022, the release of ChatGPT by OpenAI sparked a gold rush, leading many enterprises to hard-code their initial applications directly into the GPT-3.5 and GPT-4 APIs. By mid-2023, the market saw an explosion of competition, with Anthropic’s Claude, Google’s Gemini, and Meta’s Llama series providing viable alternatives.

By 2024, the "state of the art" was changing every three to six months. A model that was the undisputed leader in reasoning in January was often eclipsed by a cheaper, faster, or more specialized model by June. CIOs who built their entire infrastructure around a single model found themselves trapped. They were unable to take advantage of the 50% to 70% cost reductions offered by newer iterations or the enhanced context windows of competing architectures without a complete rewrite of their application logic. This historical pattern suggests that by 2026, the gap between "fixed" and "flexible" architectures will represent the difference between an agile, cost-effective enterprise and one burdened by obsolete legacy AI.

The Technical Debt of 2024 Assumptions

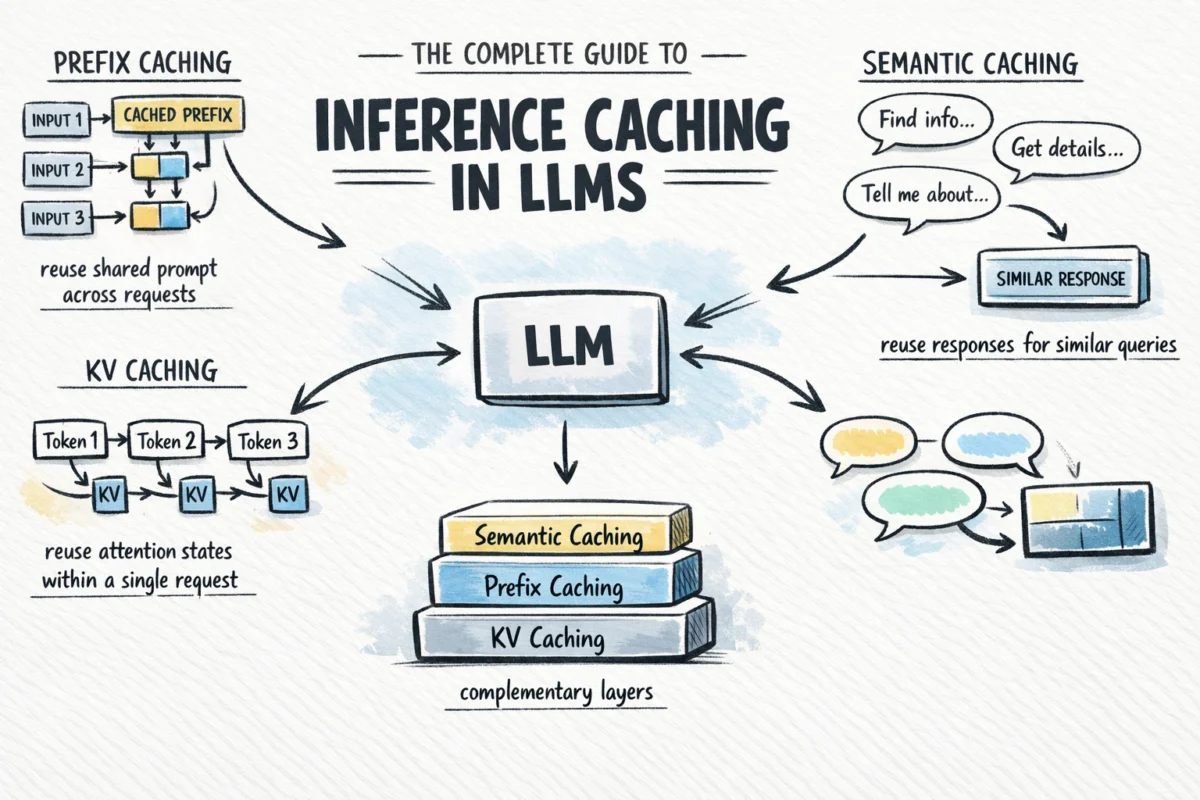

The "2024 assumptions" referred to in the report include the belief that a single, massive model is the best solution for every task. In reality, the industry is moving toward "mixture of experts" (MoE) designs, which activate only part of a model for each input, and toward small language models (SLMs) specialized for narrower work. If a CIO’s architecture assumes a monolithic model structure, the organization cannot easily pivot to using smaller, specialized models for simpler tasks, a move that typically results in significant latency and cost improvements.

Data from recent market analyses indicates that the cost of running LLMs has decreased by nearly 90% since early 2023 for comparable performance levels. However, realizing these savings requires the ability to swap models seamlessly. Organizations that lack this "plug-and-play" capability at the model layer are effectively paying a "rigidity tax." This tax manifests as higher operational costs, slower response times for end-users, and an inability to adopt new features—such as multimodal capabilities or enhanced reasoning—as soon as they hit the market.
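To make the "rigidity tax" concrete, the short Python sketch below estimates the monthly overspend of staying on a locked-in model when a comparable, cheaper one is available. The function name `rigidity_tax` and every figure in it (token volume, prices) are hypothetical illustrations, not data from the report.

```python
def rigidity_tax(monthly_tokens_millions: float,
                 locked_in_price: float,
                 best_available_price: float) -> float:
    """Return the monthly overspend from staying on the locked-in model.

    Prices are in dollars per million tokens. All inputs are hypothetical.
    """
    return monthly_tokens_millions * (locked_in_price - best_available_price)


# Hypothetical workload: 500 million tokens per month, a locked-in model at
# $10.00 per million tokens, and a comparable alternative priced 90% lower.
tax = rigidity_tax(500, 10.00, 1.00)
print(f"Monthly rigidity tax: ${tax:,.2f}")  # prints "Monthly rigidity tax: $4,500.00"
```

The calculation covers only direct token spend. Slower response times and delayed access to new features, the other components of the tax described above, would need separate estimates.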

Supporting Data: The Rising Cost of Rigidity

Industry research from Gartner and IDC supports the urgency of this decision. Recent surveys of global IT leaders suggest that:

- Over 60% of enterprises currently use three or more different LLMs across various business units.

- Approximately 45% of CIOs cite "vendor lock-in" as their primary concern when scaling generative AI.

- The average performance-to-price ratio of open-source models (like Llama 3) is now competitive with proprietary models for 80% of common enterprise tasks, such as summarization and data extraction.

These statistics highlight a clear trend: the value is shifting from the models themselves to the orchestration layer. A flexible architecture allows a company to route a query to the most efficient model available at that specific moment. For example, a simple customer service inquiry might be routed to a fast, inexpensive SLM, while a complex legal analysis is sent to a high-reasoning flagship model. Without model-agnostic design, this level of optimization is impossible.
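The routing example above can be sketched as a simple rule-based dispatcher in Python. This is a minimal illustration, assuming that requests arrive already labeled with a task type; the model names and the set of "simple" task types are hypothetical placeholders rather than recommendations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    model: str
    reason: str


# Hypothetical model identifiers; substitute whatever the organization deploys.
SMALL_MODEL = "small-fast-model"
FLAGSHIP_MODEL = "flagship-reasoning-model"

# Task types assumed simple enough for a small language model.
SIMPLE_TASKS = {"customer_service", "summarization", "data_extraction"}


def route(task_type: str) -> Route:
    """Pick a model tier for a request based on its task type."""
    if task_type in SIMPLE_TASKS:
        return Route(SMALL_MODEL, "simple task: prefer low cost and latency")
    # Unknown or complex tasks default to the stronger model.
    return Route(FLAGSHIP_MODEL, "complex or unknown task: prefer reasoning quality")


print(route("customer_service").model)  # prints "small-fast-model"
print(route("legal_analysis").model)    # prints "flagship-reasoning-model"
```

Defaulting unknown task types to the flagship model trades cost for safety; an organization with strict budgets might choose the opposite default.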

Stakeholder Perspectives and Market Reactions

The shift toward model flexibility is receiving mixed reactions from different sectors of the technology ecosystem. Major cloud providers (hyperscalers) have traditionally encouraged "walled garden" ecosystems, where using their specific model and compute stack provides the most seamless experience. However, in response to enterprise demand for flexibility, even these giants are beginning to offer "model gardens" and "model-as-a-service" platforms that allow for easier switching.

From the perspective of Chief Financial Officers (CFOs), model flexibility is a risk-mitigation strategy. "We cannot afford to tie our five-year digital transformation budget to a single startup or a single model version," noted one financial analyst covering the tech sector. "The CIOs who are winning the confidence of the board are those who can prove that their AI investments are ‘future-proofed’ against the inevitable obsolescence of today’s leading models."

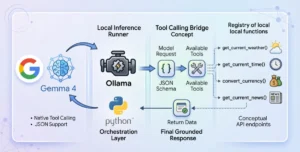

Meanwhile, developers and AI engineers are advocating for "abstraction layers." Much like how the software industry moved from physical servers to virtual machines and then to containers (Docker/Kubernetes) to ensure portability, AI development is moving toward "LLM Gateways." These gateways provide a unified API through which developers can call any model, making the underlying model a commodity rather than a constraint.
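A gateway of this kind can be illustrated with a few lines of Python. The sketch below is not any vendor's product or API: `LLMGateway`, the backend names, and the stub functions are all hypothetical, and a real deployment would wrap actual provider SDK calls where the stubs are.

```python
from typing import Callable, Dict

# A backend is any callable that takes a prompt and returns generated text.
Backend = Callable[[str], str]


class LLMGateway:
    """Hypothetical unified entry point that hides provider-specific details."""

    def __init__(self) -> None:
        self._backends: Dict[str, Backend] = {}

    def register(self, name: str, backend: Backend) -> None:
        self._backends[name] = backend

    def complete(self, model: str, prompt: str) -> str:
        if model not in self._backends:
            raise KeyError(f"no backend registered for model {model!r}")
        return self._backends[model](prompt)


# Stub backends standing in for real provider calls (assumptions for the demo).
def vendor_a_stub(prompt: str) -> str:
    return f"[vendor-a] {prompt}"


def local_model_stub(prompt: str) -> str:
    return f"[local-open-model] {prompt}"


gateway = LLMGateway()
gateway.register("vendor-a", vendor_a_stub)
gateway.register("local-open-model", local_model_stub)


def summarize(text: str, model: str) -> str:
    """Application logic: it knows the gateway, not the provider."""
    return gateway.complete(model, f"Summarize: {text}")


print(summarize("Q3 report", "vendor-a"))          # prints "[vendor-a] Summarize: Q3 report"
print(summarize("Q3 report", "local-open-model"))  # prints "[local-open-model] Summarize: Q3 report"
```

Because `summarize` depends only on the gateway interface, switching providers is a matter of changing the `model` argument or the registration, not the application code.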

Broader Impact and Strategic Implications for 2026

As we look toward 2026, the implications of Decision #5 extend beyond mere technical efficiency. They touch upon regulatory compliance, data sovereignty, and competitive advantage.

- Regulatory Compliance and the EU AI Act: As global regulations like the EU AI Act come into full effect, organizations may be forced to abandon certain models if they are found to be non-compliant with transparency or bias requirements. A flexible architecture allows for an "emergency swap" of the model layer without disrupting the entire business process.

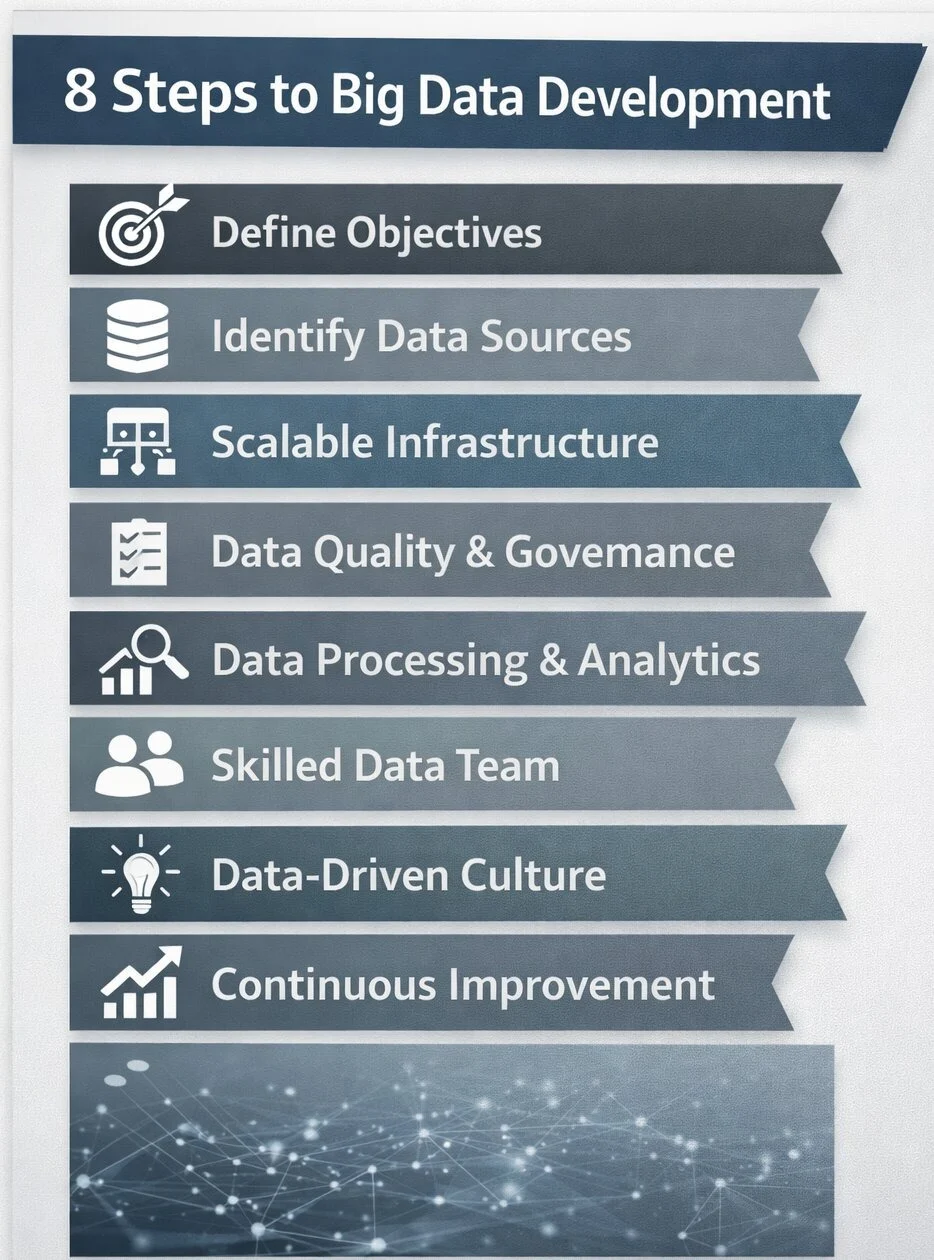

- Data Sovereignty: Many enterprises are discovering that while they started with public cloud models, they eventually need to move certain workloads to "on-premises" or "private cloud" open-source models to meet data privacy standards. An architecture built for flexibility makes this transition a configuration change rather than a re-engineering nightmare.

- The Rise of "Model Routing": The report suggests that by 2026, the most successful companies will not just be "using AI" but "orchestrating AI." This involves sophisticated "model routers" that automatically choose the best model based on cost, speed, and accuracy requirements for every individual request.
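The three points above (compliance swaps, data sovereignty, and per-request routing) can be combined in one small Python sketch: a model catalog held as data, plus a selector that picks the cheapest model satisfying a request's constraints. The catalog entries, field names, and numbers are hypothetical assumptions for illustration only.

```python
from dataclasses import dataclass, replace
from typing import List, Optional


@dataclass(frozen=True)
class ModelProfile:
    name: str
    cost_per_million_tokens: float  # dollars, hypothetical
    p95_latency_ms: int             # hypothetical
    quality_score: float            # 0..1, e.g. from internal evaluations
    compliant: bool                 # cleared by the compliance team
    on_premises: bool               # runs inside the organization's boundary


# Hypothetical catalog; in practice this would live in configuration.
CATALOG: List[ModelProfile] = [
    ModelProfile("hosted-flagship", 10.0, 2500, 0.95, True, False),
    ModelProfile("hosted-small", 0.5, 400, 0.80, True, False),
    ModelProfile("onprem-open", 1.0, 900, 0.85, True, True),
]


def select_model(catalog: List[ModelProfile], min_quality: float,
                 max_latency_ms: int,
                 require_on_premises: bool = False) -> Optional[ModelProfile]:
    """Return the cheapest compliant model meeting the constraints, or None."""
    candidates = [
        m for m in catalog
        if m.compliant
        and m.quality_score >= min_quality
        and m.p95_latency_ms <= max_latency_ms
        and (m.on_premises or not require_on_premises)
    ]
    return min(candidates, key=lambda m: m.cost_per_million_tokens, default=None)


# Routine request: the cheapest adequate model wins.
print(select_model(CATALOG, 0.75, 1000).name)  # prints "hosted-small"

# Data-sovereignty request: only on-premises models qualify.
print(select_model(CATALOG, 0.75, 1000, require_on_premises=True).name)  # prints "onprem-open"

# "Emergency swap": mark one model non-compliant; routing adapts without code changes.
swapped = [replace(m, compliant=False) if m.name == "hosted-small" else m
           for m in CATALOG]
print(select_model(swapped, 0.75, 1000).name)  # prints "onprem-open"
```

Keeping compliance and residency as catalog data rather than code is what turns a model change into a configuration change in this sketch.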

Conclusion: Designing for an Uncertain Future

The fifth career-making decision for CIOs is a call to action to move away from the "ad hoc" AI implementation strategies of the past two years. The report, "7 career-making AI decisions for CIOs in 2026," makes it clear that the model layer must be treated as a dynamic utility rather than a static foundation.

To succeed, CIOs must prioritize architectures that decouple the application logic from the underlying model. This means investing in robust API management, adopting model-agnostic development frameworks, and maintaining a diverse portfolio of model providers. The goal is to build a system where the "intelligence" can be upgraded as easily as a software patch.
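One practical payoff of a diverse provider portfolio is failover. The Python sketch below shows one possible shape for it, assuming each provider is wrapped as a plain callable; the provider names and the simulated outage are hypothetical, and the report itself does not prescribe this mechanism.

```python
from typing import Callable, Sequence, Tuple

# Each provider is a (name, callable) pair; the callable maps a prompt to text.
Provider = Tuple[str, Callable[[str], str]]


def complete_with_fallback(prompt: str,
                           providers: Sequence[Provider]) -> Tuple[str, str]:
    """Try providers in priority order; return (provider_name, response)."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # broad on purpose: any failure triggers fallback
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Hypothetical providers: the primary simulates an outage, the secondary works.
def primary(prompt: str) -> str:
    raise TimeoutError("simulated outage")


def secondary(prompt: str) -> str:
    return f"answer to: {prompt}"


used, answer = complete_with_fallback(
    "What is our refund policy?",
    [("primary-vendor", primary), ("secondary-vendor", secondary)],
)
print(used)    # prints "secondary-vendor"
print(answer)  # prints "answer to: What is our refund policy?"
```

A production version would likely add retries, timeouts, and logging of which provider served each request, so that quality differences between providers remain visible.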

In the final analysis, the difference between an AI strategy that scales and one that stalls will come down to foresight. The CIOs who design for flexibility today are the ones who will be able to harness the breakthroughs of 2026 without being held back by the limitations of 2024. As the report concludes, the model is no longer the destination; it is a moving target. The architecture, however, is the legacy. By ensuring that AI is built to evolve, leaders can transform a period of intense technological volatility into a sustained competitive advantage.