Why Enterprise AI Fails Without a Context Engine: The Critical Role of Governed Memory in Scaling Generative AI

The global enterprise landscape has reached a paradoxical crossroads in its adoption of artificial intelligence. While collective investment in generative AI (GenAI) is estimated to have surpassed $40 billion, the vast majority of these initiatives are failing to transition from experimental pilots to value-generating production tools. According to recent data from MIT’s NANDA initiative, a staggering 95% of enterprise GenAI pilots fail to deliver measurable business value. This systemic failure is not a result of poor model quality or a lack of computing power, but rather a fundamental disconnect between generalized AI models and the highly specific, complex, and "brownfield" environments of modern corporations.

To address this gap, industry leaders are increasingly pointing toward a critical missing component: the context engine. Without a dedicated layer of organizational knowledge, system awareness, and governed memory, AI agents operate in a vacuum, lacking the institutional intuition that human employees acquire through months of onboarding. The challenge of scaling AI is now being reframed not as a battle of model size, but as a challenge of infrastructure and the delivery of governed, context-rich environments.

The Crisis of Enterprise AI Adoption

The MIT NANDA initiative’s "State of AI in Business 2025" report highlights a sobering reality for C-suite executives. Despite the hype surrounding Large Language Models (LLMs), the inability of these systems to retain feedback, adapt to specific workflow contexts, or improve over time has rendered them ineffective for complex enterprise tasks. Most GenAI systems are "stateless" by design, meaning they approach every prompt as if it were their first interaction, oblivious to the millions of lines of legacy code, internal documentation, and unwritten cultural norms that define a company’s operations.

The core barrier identified in the report is a flawed approach. Most organizations treat GenAI as a plug-and-play solution rather than a system that requires a sophisticated data architecture to function within an existing ecosystem. This lack of integration leads to "hallucinations" or, more commonly, technically correct but contextually irrelevant outputs that require extensive human correction, thereby negating the promised productivity gains.

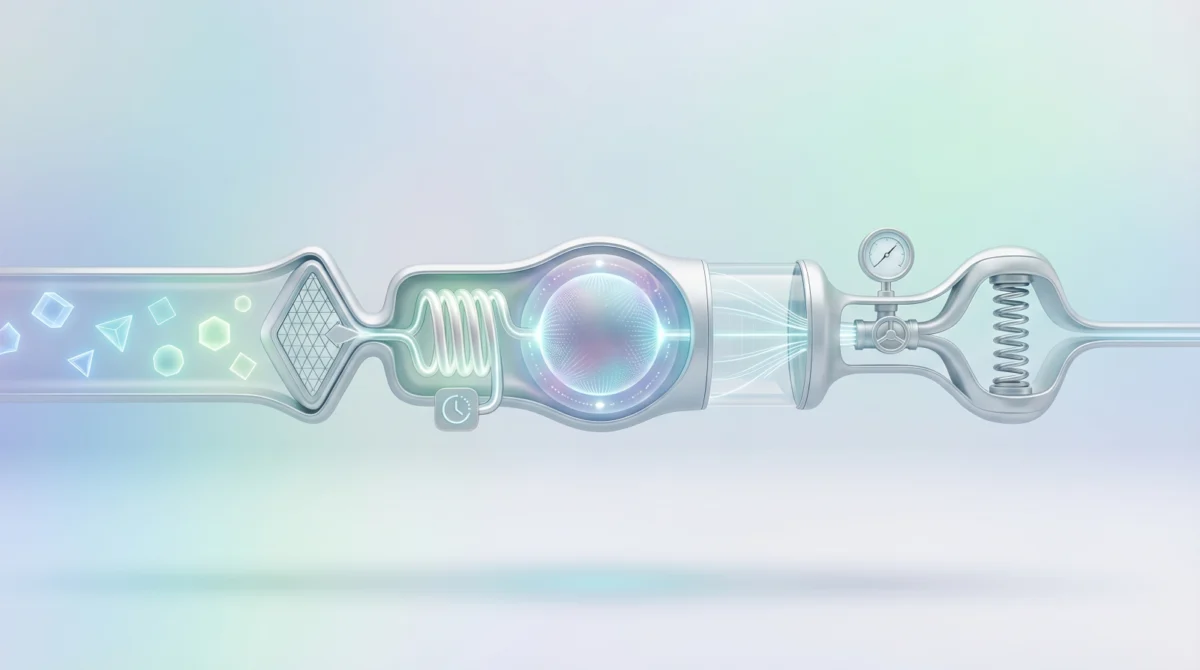

The Science of Governed Memory

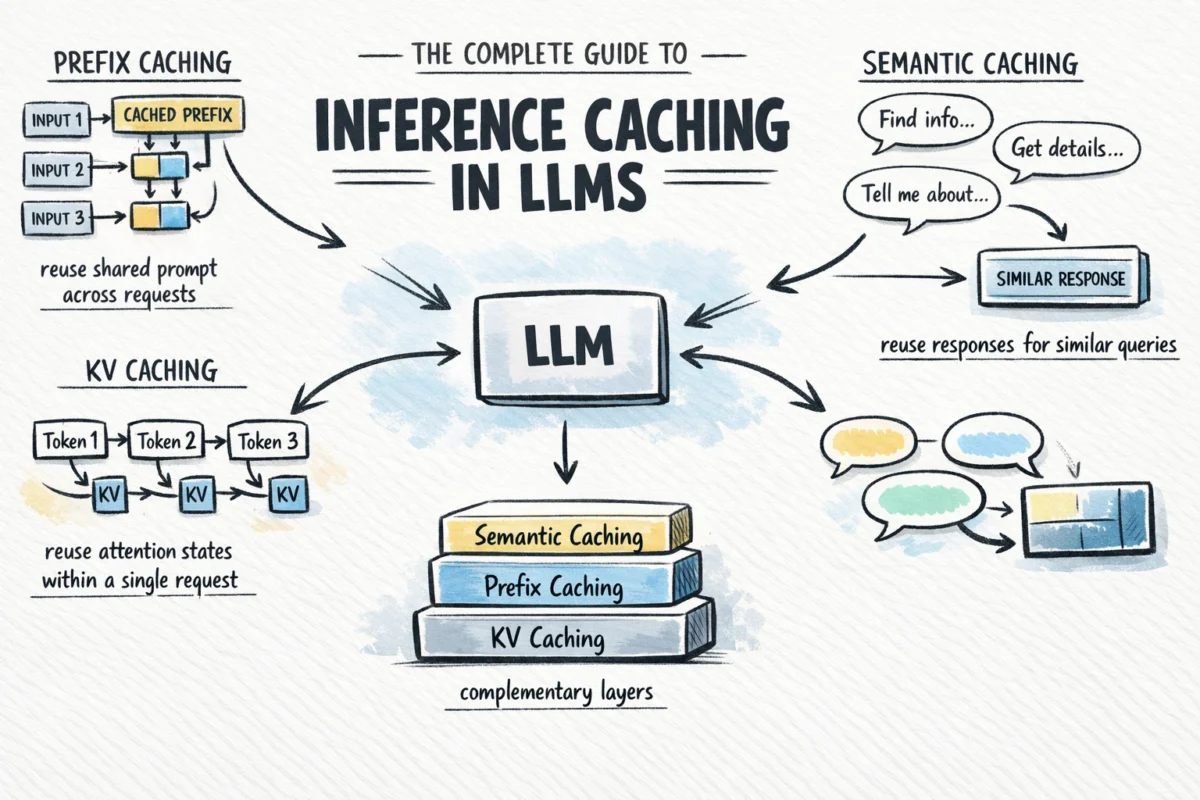

Recent academic research supports the necessity of a dedicated context layer. A study published on ResearchGate, titled "Governed Memory: A Production Architecture for Multi-Agent Workflows," provides empirical evidence of the performance gap. The study demonstrates that even the most advanced AI systems operate with a mediocre 53% to 65% accuracy on long-horizon, multi-step enterprise tasks when they lack a shared, governed organizational context.

However, when a dedicated context layer, or "governed memory," is introduced, performance on the LoCoMo benchmark rises to 74.8%. This represents a material reduction in task failure for production workflows. Beyond accuracy, the study found that a context layer reduces token consumption by 50.3% across multi-step executions. In a production environment billed by token usage, this translates into roughly half the token spend for the same workload, although other operating costs are unaffected. Furthermore, the architecture showed zero cross-entity data leakage under adversarial testing, satisfying a primary requirement for highly regulated sectors such as banking and healthcare.
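To make the cost arithmetic concrete, here is a minimal sketch that applies the study's 50.3% token reduction to a hypothetical workload. The monthly token volume and the price per million tokens are illustrative assumptions, not figures from the study, and the saving covers only token-metered spend.

```python
TOKEN_REDUCTION = 0.503  # reduction in token consumption reported by the study


def monthly_token_cost(tokens: int, usd_per_million: float) -> float:
    """Cost of a token volume at a flat per-million-token price."""
    return tokens / 1_000_000 * usd_per_million


# Hypothetical workload: both values are assumptions for illustration only.
baseline_tokens = 2_000_000_000
usd_per_million = 5.0

baseline_cost = monthly_token_cost(baseline_tokens, usd_per_million)
reduced_tokens = round(baseline_tokens * (1 - TOKEN_REDUCTION))
reduced_cost = monthly_token_cost(reduced_tokens, usd_per_million)
savings = baseline_cost - reduced_cost

print(f"baseline: ${baseline_cost:,.2f}, with context layer: ${reduced_cost:,.2f}")
```

Under these assumed inputs, the saving scales linearly with token volume, so the percentage holds at any workload size as long as pricing is flat.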

Insights from Eran Yahav: The Onboarding Gap

In a recent discussion on the AI in Business podcast, Eran Yahav, CTO and co-founder of Tabnine and a Professor of Computer Science at the Technion, explained why organizational context must be viewed as infrastructure. Yahav, whose background includes a tenure as a Research Staff Member at the IBM T.J. Watson Research Center, argues that AI agents are currently being "hired" into companies without any form of onboarding.

"AI agents are facing this critical challenge of not having the understanding that human engineers do," Yahav noted. "They need to understand the organization, the existing systems, how existing systems are being maintained and manipulated."

In large financial institutions, a human engineer typically requires six to nine months to become fully productive. This period is spent learning the dependencies, business logic, and legacy patterns encoded in millions of lines of code. AI agents, when deployed without a context engine, are expected to perform immediately without this knowledge. Consequently, they often select outdated components or misinterpret legacy patterns, behaving much like an untrained junior developer who has been given access to a massive, complex codebase without guidance.

The "Ferrari Without a Map" Analogy

Yahav uses a vivid analogy to describe the current state of AI agents: they are like Ferraris without maps. "The agent itself is like this very powerful car. It can go really, really fast. But if it doesn’t have a map of where it’s trying to go, it will just drive in circles very, very quickly and basically burn a lot of fuel and get nowhere."

In an enterprise setting, "burning fuel" equates to high token costs and wasted compute resources. Yahav points out that if an agent is asked to retrieve employee data in a large firm, it might find fourteen different ways to do so. Without a context engine to signal which API is the current standard and which thirteen are deprecated, the agent will likely pick the most accessible or the first one it encounters, which is often the wrong choice.

To prevent this, a context engine must continuously ingest source code, architectural artifacts, historical incident data, and production-level logs. It then pre-computes the dependencies among them so that when an agent is tasked with a project, it queries a governed, up-to-date map of the organization rather than trying to reinvent the wheel with every prompt.
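The idea of querying a pre-computed map instead of rediscovering options can be sketched roughly as follows. This is not Tabnine's implementation; `ApiRecord`, `ContextMap`, and every API name are hypothetical, and a real engine would build its records from ingested code and logs rather than a hand-written list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ApiRecord:
    name: str         # hypothetical API identifier
    capability: str   # what the API does, e.g. "employee_data"
    deprecated: bool  # governance flag assumed to be maintained by the engine


class ContextMap:
    """Index built once, up front, from capability to non-deprecated APIs."""

    def __init__(self, records):
        self._current = {}
        for record in records:
            if not record.deprecated:
                self._current.setdefault(record.capability, []).append(record.name)

    def current_api(self, capability):
        """Return the single current API, or fail loudly if it is ambiguous."""
        names = self._current.get(capability, [])
        if len(names) != 1:
            raise LookupError(
                f"expected exactly one current API for {capability!r}, found {len(names)}"
            )
        return names[0]


# Fourteen ways to fetch employee data: thirteen deprecated, one current.
records = [
    ApiRecord(f"legacy_employee_api_{i}", "employee_data", True) for i in range(1, 14)
]
records.append(ApiRecord("employee_service", "employee_data", False))

context_map = ContextMap(records)
chosen = context_map.current_api("employee_data")
print(chosen)
```

The agent asks the map one question and gets one governed answer, instead of scanning all fourteen candidates and picking whichever it encounters first.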

Perimeter Deployment and Compliance

A significant hurdle for enterprise AI is the sensitivity of the data required to provide context. A context engine, by definition, must have access to a company’s most valuable intellectual property: its source code, design records, and production telemetry. For many organizations, particularly those in regulated industries, sending this data to a third-party cloud provider is a non-starter.

Yahav emphasizes that the context engine must sit within the enterprise boundary. "It has access to many of the most precious sources of information inside the organization. Many of our customers want the context engine to run inside their perimeter," he explained. This requirement for on-prem or private cloud deployment is driven by both security and trust. Organizations must be certain that the system governing their AI agents is not inadvertently leaking internal logic to external entities or training models on proprietary secrets.

Perimeter-based deployment also ensures that the "governed" part of governed memory is strictly controlled. It allows organizations to set specific guardrails on what the AI can see and do, ensuring that the agent’s behavior remains within the bounds of corporate policy and regulatory compliance.
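A guardrail of this kind might look like the sketch below, in which every read is checked against the entities an agent is scoped to. The `GovernedMemory` class, the scope model, and the sample entries are all assumptions made for illustration; the article does not describe how any particular product enforces access.

```python
class GovernedMemory:
    """Toy memory store that refuses reads outside an agent's entity scopes."""

    def __init__(self):
        self._entries = {}  # entity name -> list of remembered facts

    def write(self, entity, text):
        self._entries.setdefault(entity, []).append(text)

    def read(self, agent_scopes, entity):
        # Enforce the guardrail before touching any data.
        if entity not in agent_scopes:
            raise PermissionError(f"agent is not scoped to entity {entity!r}")
        return list(self._entries.get(entity, []))


memory = GovernedMemory()
memory.write("customer_a", "Prefers quarterly billing")
memory.write("customer_b", "Open incident on payments API")

agent_scopes = frozenset({"customer_a"})  # hypothetical policy for one agent
allowed = memory.read(agent_scopes, "customer_a")

try:
    memory.read(agent_scopes, "customer_b")
    leaked = True
except PermissionError:
    leaked = False

print(allowed, leaked)
```

Denying by default and raising on a scope violation keeps cross-entity access visible in logs rather than silently returning an empty result.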

Redefining ROI: From Pilots to Performance

For Chief Financial Officers (CFOs) and technology leaders, the shift from AI pilots to AI production requires a new set of metrics. Yahav suggests that the industry’s current methods for measuring ROI are immature. He recommends focusing on two primary metrics: token spend efficiency and team output velocity.

Without a context engine, token spend remains high because agents must process massive amounts of irrelevant information to find the correct path. By pre-computing organizational knowledge, context engines can reduce this waste significantly. Yahav notes that enterprises operating with a centralized context layer have seen up to 2x higher success rates in task completion and an 80% reduction in unnecessary token consumption.

However, Yahav is candid about the challenges that remain. "The current ways in which we have to measure this are not sufficiently sophisticated—and this is true not just for us, but for the entire industry," he admitted. Measuring the velocity of a team assisted by AI requires a more nuanced approach than traditional software development metrics, as it must account for the quality of the output and the reduction in technical debt.
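One simple way to operationalize token spend efficiency is tokens consumed per successfully completed task, sketched below. This definition is an assumption rather than a metric Yahav specifies, and the run data is invented to mirror the "2x success, 80% fewer tokens" scenario instead of being measured.

```python
from dataclasses import dataclass


@dataclass
class TaskRun:
    tokens_used: int
    succeeded: bool


def tokens_per_successful_task(runs):
    """Total tokens spent divided by the number of tasks that succeeded."""
    successes = sum(1 for run in runs if run.succeeded)
    if successes == 0:
        return float("inf")  # all spend was wasted
    return sum(run.tokens_used for run in runs) / successes


# Hypothetical runs: half succeed without context, all succeed with it.
without_context = [TaskRun(10_000, True), TaskRun(10_000, False)] * 2
with_context = [TaskRun(2_000, True)] * 4

baseline = tokens_per_successful_task(without_context)
improved = tokens_per_successful_task(with_context)
print(baseline, improved)
```

Because failed runs still count toward spend, this metric rewards both lower token use and higher success rates, though it says nothing about output quality or technical debt.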

Implications for the Future of Work

The integration of context engines marks a shift in the evolution of AI from "chatbots" to "autonomous agents." As these systems become more integrated into the daily workflows of engineers and knowledge workers, the role of the context engine will expand. It will serve as the "corporate brain," a living repository of institutional knowledge that is updated in real time.

The broader implication is that the competitive advantage in the AI era will not belong to the companies with the largest models, but to those with the best-organized internal data. Companies that successfully implement a context layer will be able to onboard AI agents as effectively as they onboard human employees, leading to a hybrid workforce where AI can safely and efficiently manipulate production-adjacent systems.

In conclusion, the path to a measurable return on AI investment lies in solving the context problem. By treating organizational knowledge as essential infrastructure and deploying it within a secure, governed perimeter, enterprises can finally move past the "95% failure rate" and begin to realize the transformative potential of generative AI at scale. The transition from a "Ferrari driving in circles" to a high-performance, map-guided system is the defining challenge for the next phase of enterprise digital transformation.