Navigating the Data Deluge: A Strategic Framework for Enterprise Big Data Development and Sustainable Digital Growth

The global landscape of information technology has reached a critical inflection point where the mere accumulation of data no longer guarantees a competitive advantage; rather, the ability to architect sophisticated systems for its distillation has become the primary driver of institutional success. As organizations navigate an era defined by the exponential growth of digital footprints, the transition from passive data collection to active big data development has emerged as a mandatory evolution for survival in the modern economy. According to recent industry projections from Statista, the total volume of data created, captured, copied, and consumed worldwide is expected to surge to more than 180 zettabytes by 2025, representing a staggering increase from the 64 zettabytes recorded at the start of the decade. This deluge of information, generated by every web interaction, mobile transaction, and Internet of Things (IoT) sensor, presents both an unprecedented opportunity and a logistical crisis for the modern enterprise.

The Evolution of the Data-Driven Enterprise

Historically, the challenge for businesses was the high cost of digital storage and the limited processing power of legacy hardware. Throughout the late 20th century, data management was largely a clerical function, confined to structured relational databases that tracked basic inventory and financial records. However, the advent of the cloud computing era and the proliferation of social media and mobile connectivity shifted the paradigm. By the mid-2010s, "Big Data" had moved from a buzzword to a technical reality, characterized by the "Three Vs": Volume, Velocity, and Variety.

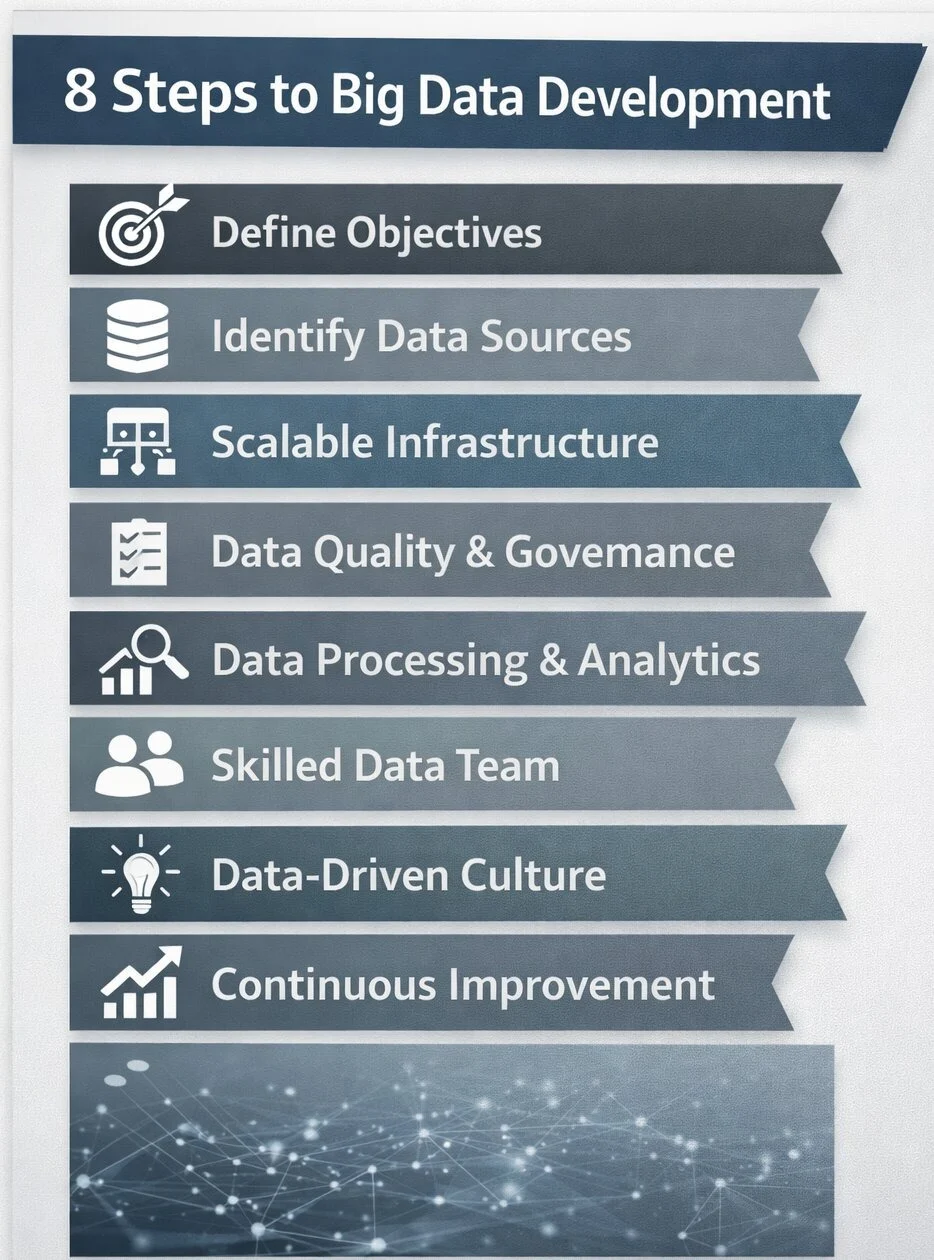

Today, the landscape has matured further. Organizations are no longer just dealing with "large" amounts of data; they are dealing with "complex" ecosystems where data is often fragmented across hybrid cloud environments and edge devices. The modern big data development strategy is, therefore, a multidisciplinary effort that integrates software engineering, statistical mathematics, and corporate strategy. Industry analysts suggest that while over 90% of large enterprises have invested in big data and AI initiatives, nearly 70% of these organizations still struggle to achieve a truly data-driven culture. This disconnect highlights the necessity of a structured, eight-step framework to bridge the gap between technical capability and business value.

Step 1: Defining Business Objectives and Key Performance Indicators

The most frequent cause of failure in big data initiatives is the "technology-first" fallacy—the assumption that deploying advanced tools will naturally reveal useful insights. Strategic big data development must instead begin with a rigorous definition of business problems. Whether the goal is to reduce customer churn, optimize supply chain logistics, or enhance fraud detection, the objective dictates the architecture.

Inverted logic—collecting data first and asking questions later—leads to "data swamps" where information becomes inaccessible and expensive to maintain. To avoid this, organizations must establish clear Key Performance Indicators (KPIs). For instance, a retail giant might measure the success of its data initiative by a 15% reduction in overstocking costs, while a financial institution might target a 20% improvement in the speed of loan approvals through automated risk assessment. By anchoring technical projects to financial and operational outcomes, data development becomes a measurable asset rather than a speculative expense.
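As a rough illustration of how such a KPI could be checked, the sketch below computes a percentage reduction against a baseline and compares it with a target. The function names and the cost figures are hypothetical and chosen only to mirror the 15% overstocking example; they do not come from any real dataset.

```python
def percent_reduction(baseline: float, current: float) -> float:
    """Return the drop from baseline to current as a percentage of baseline."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    # Multiply before dividing to keep the arithmetic exact for round figures.
    return (baseline - current) * 100.0 / baseline


def kpi_met(baseline: float, current: float, target_pct: float) -> bool:
    """True when the measured reduction reaches or exceeds the target."""
    return percent_reduction(baseline, current) >= target_pct


# Hypothetical annual overstocking costs before and after the initiative.
overstock_before = 2_000_000.0
overstock_after = 1_650_000.0

reduction = percent_reduction(overstock_before, overstock_after)
print(f"Overstocking cost reduction: {reduction:.1f}%")
print("15% target met:", kpi_met(overstock_before, overstock_after, 15.0))
```

Fixing the baseline and the target before any tooling is chosen is the point of the exercise: the same two numbers later serve as the acceptance test for the whole initiative.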

Step 2: Identification and Integration of Critical Data Assets

Once objectives are established, the focus shifts to identifying the specific datasets required to meet those goals. Most modern enterprises suffer from data fragmentation, where valuable information is trapped in "silos"—isolated departments such as marketing, sales, or logistics that use incompatible software systems.

A successful strategy involves a comprehensive audit of internal sources, such as Customer Relationship Management (CRM) systems, Enterprise Resource Planning (ERP) tools, and web logs, as well as external sources like market sentiment data and demographic trends. The core task of big data development at this stage is the creation of a unified data environment. This often involves the implementation of Data Lakes or Data Warehouses that allow for the ingestion of both structured data (like SQL tables) and unstructured data (like social media posts or video files), providing a 360-degree view of the business landscape.
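To make the idea of a unified view concrete, here is a minimal sketch that merges a CRM extract with ERP order records on a shared customer identifier. The field names (`customer_id`, `segment`, `order_total`) and the sample rows are assumptions for illustration; real systems rarely share a clean key, and a production pipeline would use a warehouse or lake engine rather than in-memory dictionaries.

```python
# Hypothetical extracts from two siloed systems.
crm_records = [
    {"customer_id": "C001", "name": "Acme Corp", "segment": "enterprise"},
    {"customer_id": "C002", "name": "Birch Ltd", "segment": "smb"},
]
erp_orders = [
    {"customer_id": "C001", "order_total": 1200.0},
    {"customer_id": "C001", "order_total": 800.0},
    {"customer_id": "C003", "order_total": 150.0},  # no matching CRM entry
]


def build_unified_view(crm, orders):
    """Combine CRM profiles and ERP orders into one row per customer."""
    unified = {}
    for record in crm:
        unified[record["customer_id"]] = {
            **record, "order_count": 0, "order_total": 0.0, "in_crm": True,
        }
    for order in orders:
        cid = order["customer_id"]
        # Customers seen only in the ERP are kept and flagged, not dropped.
        row = unified.setdefault(cid, {
            "customer_id": cid, "name": None, "segment": None,
            "order_count": 0, "order_total": 0.0, "in_crm": False,
        })
        row["order_count"] += 1
        row["order_total"] += order["order_total"]
    return unified


view = build_unified_view(crm_records, erp_orders)
for row in view.values():
    print(row)
```

Keeping unmatched customers with an `in_crm` flag, rather than silently discarding them, surfaces exactly the kind of gap between silos that the audit is meant to find.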

Step 3: Architecting Scalable and Resilient Infrastructure

Data volumes are rarely static, and infrastructure that functions efficiently today may buckle under the weight of tomorrow’s requirements. Consequently, scalability is the hallmark of professional big data development. Modern architectures often rely on distributed computing frameworks, such as Apache Spark or Apache Hadoop, which allow workloads to be spread across multiple servers.
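The underlying pattern of splitting work, processing the parts independently, and combining the results can be sketched in a few lines. The example below is a deliberately simplified stand-in: it uses threads on a single machine in place of servers in a cluster, and it does not use the Spark or Hadoop APIs. The function names are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def partition(items, n_parts):
    """Split items into n_parts roughly equal chunks."""
    return [items[i::n_parts] for i in range(n_parts)]


def partial_sum(chunk):
    """Work done independently by each worker (the 'map' step)."""
    return sum(chunk)


def distributed_sum(items, n_workers=4):
    """Sum items by fanning chunks out to workers and combining the results."""
    chunks = partition(items, n_workers)
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        partials = list(pool.map(partial_sum, chunks))
    return sum(partials)  # the 'reduce' step


total = distributed_sum(list(range(1, 101)))
print(total)
```

Because each chunk is processed without reference to the others, adding capacity is a matter of raising the number of workers, which is the property that lets real frameworks scale out across machines.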

Furthermore, the shift toward cloud-native solutions—offered by providers such as Amazon Web Services (AWS), Microsoft Azure, and Google Cloud—has democratized access to high-performance computing. These platforms allow businesses to scale their storage and processing power up or down based on real-time demand, ensuring cost-efficiency. Security must be baked into this infrastructure from the ground up, incorporating end-to-end encryption and rigorous identity and access management (IAM) to protect sensitive corporate and consumer information.

Step 4: The Imperative of Data Quality and Governance

The principle of "garbage in, garbage out" remains the ultimate law of data science. Inaccurate, duplicate, or outdated data can lead to catastrophic business decisions. High-quality big data development requires a robust data cleansing process, where records are standardized, validated, and de-duplicated.
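The three cleansing operations named above (standardize, validate, de-duplicate) can be sketched as a small pipeline. The record layout, the simplified email pattern, and the choice of email as the de-duplication key are all assumptions made for this illustration, not a prescribed schema.

```python
import re

# Deliberately simple pattern for illustration; not a full email validator.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def standardize(record):
    """Normalize casing and whitespace so equivalent values compare equal."""
    return {
        "email": record.get("email", "").strip().lower(),
        "name": " ".join(record.get("name", "").split()).title(),
    }


def is_valid(record):
    """Reject rows with a missing name or a malformed email."""
    return bool(record["name"]) and EMAIL_PATTERN.match(record["email"]) is not None


def cleanse(records):
    """Standardize, validate, then keep the first row seen for each email."""
    seen = set()
    clean = []
    for raw in records:
        rec = standardize(raw)
        if not is_valid(rec) or rec["email"] in seen:
            continue
        seen.add(rec["email"])
        clean.append(rec)
    return clean


raw_records = [
    {"email": " Ana@Example.com ", "name": "ana  lopez"},
    {"email": "ana@example.com", "name": "Ana Lopez"},   # duplicate after cleanup
    {"email": "not-an-email", "name": "Bad Row"},        # fails validation
    {"email": "li@example.org", "name": "li wei"},
]
cleaned = cleanse(raw_records)
print(cleaned)
```

The order matters: standardizing first is what allows the first two rows to be recognized as the same customer.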

Beyond technical cleaning, organizations must implement data governance frameworks. Governance defines the policies for data ownership, privacy, and compliance with global regulations such as the General Data Protection Regulation (GDPR) and the California Consumer Privacy Act (CCPA). By maintaining detailed metadata—data about data—analysts can trace the lineage of information, ensuring that every insight is backed by a verifiable and ethical source.

Step 5: Advanced Processing and Analytical Transformation

Raw data is essentially noise; processing is what turns that noise into music. This stage involves the transformation of data into formats suitable for deep analysis. It includes filtering out irrelevant variables and aggregating data points to reveal broader trends.
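A minimal sketch of this filter-then-aggregate step follows. The event fields (`region`, `status`, `amount`) and the sample rows are hypothetical; the point is only to show irrelevant rows being dropped before the remaining ones are rolled up into a trend-level summary.

```python
from collections import defaultdict

# Hypothetical raw transaction events.
events = [
    {"region": "north", "status": "completed", "amount": 120.0},
    {"region": "north", "status": "cancelled", "amount": 75.0},
    {"region": "south", "status": "completed", "amount": 200.0},
    {"region": "north", "status": "completed", "amount": 80.0},
]


def revenue_by_region(rows):
    """Filter out non-completed events, then total the amounts per region."""
    totals = defaultdict(float)
    for row in rows:
        if row["status"] != "completed":
            continue  # filtering: drop rows irrelevant to the question
        totals[row["region"]] += row["amount"]  # aggregation
    return dict(totals)


summary = revenue_by_region(events)
print(summary)
```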

Modern analytics has moved beyond descriptive reports (what happened) to predictive and prescriptive analytics (what will happen and what should we do about it). For example, a logistics company might use real-time traffic and weather data to prescribe the most fuel-efficient routes for its fleet. Data visualization tools play a vital role here, converting complex algorithmic outputs into intuitive dashboards that allow non-technical executives to grasp market shifts at a glance.

Step 6: Cultivating Multi-Disciplinary Talent and Skills

The human element remains the most significant bottleneck in the big data pipeline. There is currently a global shortage of skilled data engineers and scientists. Professional big data development requires a triad of expertise:

- Data Engineers, who build the "pipes" and infrastructure.

- Data Scientists/Analysts, who interpret the data and build models.

- Business Translators, who bridge the gap between technical findings and executive strategy.

To combat the talent gap, forward-thinking companies are investing in internal "upskilling" programs, teaching existing staff the basics of data literacy. Additionally, many are turning to specialized third-party development services to accelerate their digital transformation without the overhead of massive internal hiring.

Step 7: Establishing a Data-Driven Corporate Culture

Technology and talent are insufficient if the corporate culture remains resistant to evidence-based decision-making. Historically, many organizations relied on the "HiPPO" method—Highest Paid Person’s Opinion. Transitioning to a data-driven culture requires leadership to champion analytics at every level.

Research indicates that companies that empower mid-level managers and frontline employees with data access are more agile and resilient. When data is democratized—made available to those who need it through user-friendly interfaces—it fosters a culture of experimentation and continuous improvement.

Step 8: Continuous Iteration and Lifecycle Management

Big data development is a journey, not a destination. As new technologies like Generative AI and Machine Learning evolve, data strategies must be updated. A "set it and forget it" mentality leads to technical debt and obsolescence.

Organizations must regularly audit their data systems to ensure they are still meeting the business goals defined in Step 1. This includes retiring old data that is no longer useful (to save costs and reduce liability) and integrating new data streams from emerging sources like 5G-enabled devices.
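One way such an audit might flag data for retirement is a simple retention rule based on when a dataset was last used. The sketch below assumes a hypothetical catalog with a `last_used` date per dataset and a 365-day retention window; actual retention periods depend on legal and business requirements.

```python
from datetime import date, timedelta


def split_by_retention(records, today, retention_days):
    """Separate datasets into those to keep and those past the retention window."""
    cutoff = today - timedelta(days=retention_days)
    keep, retire = [], []
    for record in records:
        if record["last_used"] >= cutoff:
            keep.append(record)
        else:
            retire.append(record)
    return keep, retire


# Hypothetical catalog entries.
datasets = [
    {"name": "web_logs_2019", "last_used": date(2020, 1, 15)},
    {"name": "orders_current", "last_used": date(2024, 5, 30)},
]

keep, retire = split_by_retention(datasets, today=date(2024, 6, 1), retention_days=365)
print("keep:", [d["name"] for d in keep])
print("retire:", [d["name"] for d in retire])
```

Passing `today` in as a parameter, rather than reading the system clock inside the function, keeps the rule reproducible when the audit is rerun or reviewed later.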

Broader Impact and Implications for the Global Economy

The implications of successful big data development extend far beyond individual corporate balance sheets. On a macroeconomic level, the efficient use of data is driving a "Fourth Industrial Revolution," characterized by hyper-personalization and unprecedented operational efficiency. In healthcare, big data is accelerating drug discovery and enabling personalized medicine based on genetic profiles. In the public sector, smart cities are using data to reduce energy consumption and improve emergency response times.

However, this transition also raises significant ethical concerns. The concentration of data power in the hands of a few large corporations and the potential for algorithmic bias are subjects of intense international debate. As businesses refine their data strategies, they must also grapple with their role as stewards of public trust.

In conclusion, the path to becoming a data-driven organization is fraught with technical and cultural hurdles. Yet the cost of inaction is significantly higher. By following a structured approach that prioritizes business goals, ensures data quality, and fosters a culture of data literacy, enterprises can transform the overwhelming "data deluge" into a structured reservoir of insight. Those who master the art and science of big data development will not only survive the digital age but will define its future.