Generative Artificial Intelligence and Voxel-Based Landscapes Advancing 3D Terrain Synthesis Through Neural Tokenization

The landscape of procedural world generation is undergoing a fundamental shift as researchers move beyond traditional noise-based algorithms toward deep learning architectures capable of "dreaming" in three dimensions. In a recent development within the field of computational geometry and generative modeling, a new pipeline has been established to synthesize 3D Minecraft terrain using Vector Quantized Variational Autoencoders (VQ-VAE) and Transformer architectures. This approach seeks to replace the rigid, hard-coded noise functions—such as Perlin or Simplex noise—that have defined sandbox gaming for over a decade with a more fluid, semantically aware system of voxel generation.

Minecraft, a cultural phenomenon and a staple of voxel-based design, has historically relied on procedural generation to create its near-infinite worlds. In current iterations, the game utilizes complex noise functions to generate "chunks"—segments of the world measuring 16 by 16 blocks with a vertical span of 384 blocks. While effective, these traditional methods are limited by mathematical constraints that often struggle to replicate the nuanced "spatial grammar" of natural landscapes without extensive manual tuning. The newly proposed neural pipeline addresses these limitations by training a model to recognize and replicate the structural essence of 3D landscapes, effectively treating world-building as a linguistic problem where blocks and shapes form the vocabulary of a digital environment.

The Evolution of 3D Generative Modeling and Data Scarcity

The transition to 3D generative modeling presents a unique set of challenges that are not present in 2D image synthesis. As highlighted in a January 2026 report by Computerphile featuring researcher Lewis Stuart, the primary obstacle in the field is the lack of high-quality, labeled 3D datasets. While billions of images exist for training models like Stable Diffusion or Midjourney, structured 3D data is significantly harder to acquire and process.

Furthermore, the "curse of dimensionality" poses a massive computational hurdle. A standard 512×512 pixel image contains approximately 262,144 data points. However, a 3D model of the same resolution would require over 134 million voxels. This exponential increase in data density requires immense computational power and sophisticated compression techniques to remain feasible for modern hardware.

To circumvent the scarcity of 3D data, researchers have turned to Minecraft as the premier source of voxel-based terrain. By utilizing automated scripts to force the game engine to render thousands of unique chunks, developers have extracted high-fidelity region files that maintain semantic consistency. Unlike random 3D objects, these chunks represent continuous, flowing landscapes where the logic of the terrain—such as the way a river bed dips or a mountain peak rises—is preserved across boundaries. This dataset allows a model to learn the "physics" of terrain, understanding that stone typically supports grass and that water naturally settles in depressions.

Methodology and the Two-Stage Generative Pipeline

The project utilizes a two-stage architecture designed to condense the complexity of millions of blocks into a manageable "latent space." This process begins with data preprocessing, which involves several critical optimizations to handle the unique quirks of the Minecraft engine.

One significant observation in voxel modeling is the prevalence of "air" blocks. In a standard Minecraft chunk, a vast majority of the vertical space is empty. To optimize training, the vertical span was restricted to a specific range (y = 0 to 128), and the vocabulary of blocks was pruned to the top 30 most frequent types. This reduction ensures that the model focuses on the structural elements that define the terrain’s shape rather than obscure decorative blocks.

To combat class imbalance—where the sheer volume of air and stone blocks could lead the model to ignore rarer features like water or snow—a Weighted Cross-Entropy loss function was implemented. By scaling the loss based on the inverse log-frequency of each block, the model is penalized more heavily for failing to predict structural "minorities." This forces the network to treat a river bed or a snow cap as being just as important as the vast expanses of subterranean stone.

Stage 1: Vector Quantization and 3D Convolutions

The first stage of the pipeline employs a VQ-VAE to tokenize 3D space. The VQ-VAE functions by passing 3D voxel data through an encoder that compresses it into a "bottleneck" layer. In this layer, continuous neural signals are snapped to a fixed codebook of 512 unique learned 3D shapes. These "codewords" act as the building blocks of the terrain—similar to how a LEGO set uses specific brick shapes to build complex structures.

Crucially, this stage utilizes 3D convolutions. While standard 2D convolutions slide a kernel across height and width, 3D convolutions allow the model to learn kernels that move across the X, Y, and Z axes simultaneously. This is essential for maintaining structural integrity, as the relationship between a block and the one beneath it (providing gravitational support) is as vital as its relationship to the blocks beside it.

One technical hurdle addressed during this phase was the issue of "dead embeddings"—codewords in the codebook that the encoder never selects, leading to wasted model capacity. To solve this, the researchers implemented a reset mechanism where underutilized codewords are forcefully re-initialized using vectors from the current input batch, ensuring the model utilizes its full dictionary of shapes.

Stage 2: Spatial Grammar and Transformer Integration

Once the VQ-VAE has learned to represent chunks as a series of tokens, the second stage employs a Generative Pre-trained Transformer (GPT) to learn the arrangement of these tokens. The 3D world is essentially flattened into a 1D sequence, allowing the GPT to treat world generation as a language modeling task.

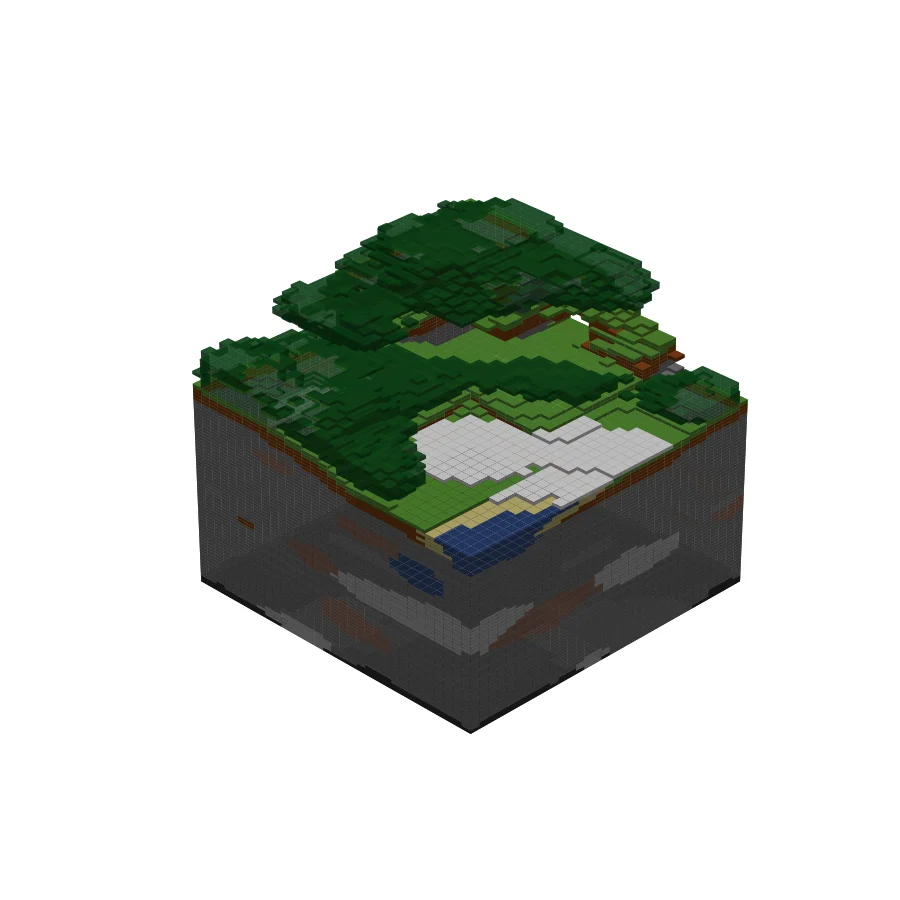

By observing sequences representing multiple chunks, the GPT learns the "spatial grammar" of the environment. It understands, for example, that if one chunk contains the beginning of a mountain range, the adjacent chunk should likely continue that elevation. During the inference phase, the model uses top-k sampling and temperature controls to generate a 256-token structural blueprint, which is then passed back through the VQ-VAE decoder to manifest as a 2×2 grid of recognizable terrain.

Analysis of Results and Semantic Consistency

The outputs of this neural pipeline demonstrate a high degree of semantic logic that often eludes traditional procedural generators. Analysis of the generated renders reveals several key successes:

- Clustering and Vegetation: The model successfully learned to cluster leaf blocks around wood blocks, mimicking the game’s tree structures rather than scattering them randomly.

- Climate Logic: The system demonstrated an understanding of altitudinal logic, placing snow blocks to cap stone and grass in a manner reflecting high-altitude tundras found in the training data.

- Hydrological Features: The model accurately placed water in depressions and bordered them with sand, indicating it had internalized the spatial logic of coastlines.

- Subterranean Complexity: Perhaps most notably, the use of 3D convolutions allowed the model to generate contiguous internal structures, including caves, overhangs, and cliffs, which are traditionally difficult to synchronize with surface terrain in noise-based systems.

While the results are highly recognizable, they are not perfect clones of the original game. The compression used by the VQ-VAE is "lossy," which occasionally results in "blurred" block boundaries or isolated floating blocks. However, the ability of the model to maintain structural integrity across multiple chunks is considered a significant milestone in 3D generative research.

Chronology of Project Development

The development of this voxel-based generative model followed a structured timeline reflecting the rapid pace of AI research in the mid-2020s:

- Initial Concept (Late 2025): The project was conceived as a response to the limitations of GAN-based approaches like ChunkGAN, aiming to leverage the superior sequence-modeling capabilities of Transformers.

- Data Extraction Phase (December 2025): Custom scripts were developed to harvest thousands of chunks from Minecraft Java Edition, creating a proprietary dataset of high-semantic consistency.

- Architecture Refinement (January 2026): Following the insights provided by the Computerphile report on 3D diffusion, the researchers pivoted to a VQ-VAE/Transformer hybrid to better handle the computational scale of voxels.

- Training and Optimization (February 2026): Implementation of Weighted Cross-Entropy loss and the "dead embedding" reset mechanism to ensure diversity in generated landscapes.

- Final Inference and Rendering (March 2026): Successful generation of the 2×2 chunk grids and validation of subterranean features.

Broader Implications for the Gaming Industry

The implications of "dreaming in voxels" extend far beyond the confines of a single game. As the industry moves toward "World-as-a-Model" (WaaM) frameworks, the ability to generate coherent 3D environments through neural networks could revolutionize game design.

For developers, this technology promises a shift from manual asset placement to high-level "biomerizing." Instead of coding specific rules for every environment, designers could provide prompts—such as "jagged peaks" or "eroded coastline"—and allow the model to synthesize a unique, structurally sound environment. This could drastically reduce the time and cost associated with creating massive open-world games.

Furthermore, this research contributes to the broader field of 3D AI, providing a roadmap for overcoming data scarcity through the use of simulated environments. As VQ-VAE and Transformer architectures continue to mature, the gap between "lossy" AI-generated shapes and pixel-perfect manual designs is expected to close, ushering in an era of truly infinite and intelligent digital worlds.

Future iterations of this work are expected to expand the vertical span to include the massive peaks and deep "cheese" caves characteristic of modern Minecraft versions. Additionally, scaling the codebook beyond 512 entries could allow the system to tokenize more complex, niche structures such as villages, temples, and intricate dungeon systems, further blurring the line between procedural generation and artificial creativity.