Python Decorators for Production Machine Learning Engineering

The transition of machine learning models from experimental Jupyter notebooks to high-availability production environments represents one of the most significant hurdles in modern software engineering. While a data scientist may focus on the accuracy of a model’s predictions, the machine learning engineer is tasked with ensuring that those predictions are delivered reliably, efficiently, and observably under real-world conditions. In this landscape, Python decorators have emerged as a vital architectural tool, offering a clean and modular way to inject operational resilience directly into inference pipelines without cluttering core algorithmic logic.

The Operational Reality of Production Machine Learning

In a research setting, a function failure is a minor inconvenience that requires a manual restart. In production, a failed function can lead to cascading system outages, corrupted downstream data, or significant financial loss. Modern machine learning systems are inherently distributed, often relying on a complex web of feature stores, vector databases, and remote API endpoints. Each of these touchpoints introduces a point of failure.

Frequently cited industry estimates suggest that nearly 80% of machine learning projects struggle to reach the deployment phase, often due to the "hidden technical debt" associated with maintaining production-grade code. This debt manifests as repetitive boilerplate code for error handling, logging, and resource management. Python decorators, functions that wrap other functions to modify their behavior, provide a sophisticated solution to this problem by allowing engineers to separate "what" a function does from "how" it behaves in a production environment.
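To make the wrapping mechanism concrete, here is a minimal sketch of a decorator. The names count_calls and predict are hypothetical and exist only for illustration; the point is that the wrapped function's own body stays untouched while the extra behavior lives in the wrapper.

```python
import functools


def count_calls(func):
    """Wrap func so that every call is counted (illustrative only)."""
    @functools.wraps(func)  # preserve the wrapped function's name and docstring
    def wrapper(*args, **kwargs):
        wrapper.calls += 1  # the operational concern lives here
        return func(*args, **kwargs)  # the core logic is left unchanged

    wrapper.calls = 0
    return wrapper


@count_calls
def predict(x):
    # Stand-in for a real model call.
    return 2 * x
```

Writing @count_calls above the definition is equivalent to predict = count_calls(predict). The decorators sketched in the following sections use the same structure, with one extra outer function where they accept parameters.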

1. Resilience Through Automatic Retry and Exponential Backoff

One of the primary challenges in production ML is the volatility of external dependencies. Whether a system is calling a hosted LLM provider like OpenAI or fetching real-time user features from a Redis cluster, network timeouts and rate limits are inevitable. Implementing manual try-except blocks across dozens of functions is not only tedious but error-prone.

The implementation of a @retry decorator with exponential backoff has become a standard industry practice. This pattern does not merely attempt a failed operation again; it introduces a progressively increasing delay between attempts, typically doubling the wait after each failure up to a fixed cap. Combined with a small random offset (jitter) on each delay, this strategy helps prevent "thundering herd" problems, where many failing clients hit a recovering dependency at the same moment and cause it to crash again.
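One possible shape for such a decorator is sketched below. The parameter names (max_attempts, base_delay, max_delay, exceptions, sleep) are assumptions chosen for this example rather than any library's API, and the random jitter is an addition that is commonly paired with backoff so that clients do not retry in lockstep.

```python
import functools
import random
import time


def retry(max_attempts=3, base_delay=0.5, max_delay=30.0,
          exceptions=(Exception,), sleep=time.sleep):
    """Retry the wrapped call with exponential backoff and random jitter.

    All parameter names are illustrative. `sleep` is injectable so the
    behavior can be tested without actually waiting.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")

    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            for attempt in range(1, max_attempts + 1):
                try:
                    return func(*args, **kwargs)
                except exceptions:
                    if attempt == max_attempts:
                        raise  # out of attempts: surface the original error
                    # The delay ceiling doubles on each failure, up to max_delay.
                    ceiling = min(max_delay, base_delay * 2 ** (attempt - 1))
                    # Pick a random delay below the ceiling to spread clients out.
                    sleep(random.uniform(0, ceiling))
        return wrapper
    return decorator
```

Restricting exceptions to errors that are plausibly transient (for example ConnectionError or TimeoutError) keeps the decorator from retrying genuine bugs, and the wrapped operation should be safe to repeat.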

The adoption of such patterns reflects a broader shift toward designing ML systems for failure, in the spirit of resilience and "chaos engineering" practices. By anticipating failure and automating recovery, engineers can maintain high uptime even when upstream services are unstable. For mission-critical applications, such as fraud detection or autonomous navigation, these few lines of decorator code serve as a primary line of defense against transient upstream failures. Retries do add latency, so the attempt count and maximum delay should fit within the caller’s latency budget.

2. Proactive Defense with Input Validation and Schema Enforcement

Data quality remains one of the most common causes of "silent" failures in machine learning. Unlike traditional software, where an incorrect input might cause an immediate crash, an ML model may ingest a null value or an incorrectly scaled feature and still produce a prediction, albeit a wildly inaccurate one. This problem is closely related to training-serving skew, in which the data a model sees in production differs from the data it was trained on, and it can lead to catastrophic business decisions if left unchecked.

The use of a @validate_input decorator allows engineers to enforce strict schemas before data ever reaches the model’s predict() method. By integrating with libraries like Pydantic or Great Expectations, or by adding explicit shape and dtype checks, these decorators can verify that incoming NumPy arrays or tensors match the expected dimensions, data types, and value ranges.
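The sketch below shows the gatekeeping idea using only the standard library, so it checks a plain sequence of numbers rather than a NumPy array or a Pydantic model. The parameters n_features, min_value, and max_value are assumptions made for this example; a production version would more likely delegate the checks to a schema library.

```python
import functools
import math


def validate_input(n_features, min_value=-math.inf, max_value=math.inf):
    """Reject feature vectors with a wrong length, non-numeric entries,
    NaN values, or values outside [min_value, max_value] (illustrative)."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(features, *args, **kwargs):
            if len(features) != n_features:
                raise ValueError(
                    f"expected {n_features} features, got {len(features)}")
            for i, value in enumerate(features):
                # bool is a subclass of int, so it is excluded explicitly.
                if isinstance(value, bool) or not isinstance(value, (int, float)):
                    raise TypeError(f"feature {i} is not numeric: {value!r}")
                if isinstance(value, float) and math.isnan(value):
                    raise ValueError(f"feature {i} is NaN")
                if not min_value <= value <= max_value:
                    raise ValueError(
                        f"feature {i}={value} is outside "
                        f"[{min_value}, {max_value}]")
            # Only validated input reaches the wrapped function.
            return func(features, *args, **kwargs)
        return wrapper
    return decorator


@validate_input(n_features=3, min_value=0.0, max_value=1.0)
def predict(features):
    # Hypothetical stand-in for a real model call.
    return sum(features) / len(features)
```

With this in place, predict([0.2, None, 0.6]) raises a TypeError at the boundary instead of letting the null value flow into the model and produce a silent, incorrect prediction.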

Over time, the industry has moved from post-hoc monitoring (detecting errors after they occur) to proactive enforcement. By rejecting malformed data at the "gate" of the function, companies can prevent corrupted data from entering their data lakes and polluting future training sets. This proactive stance is essential for compliance in regulated industries like finance and healthcare, where data provenance and integrity are legal requirements.

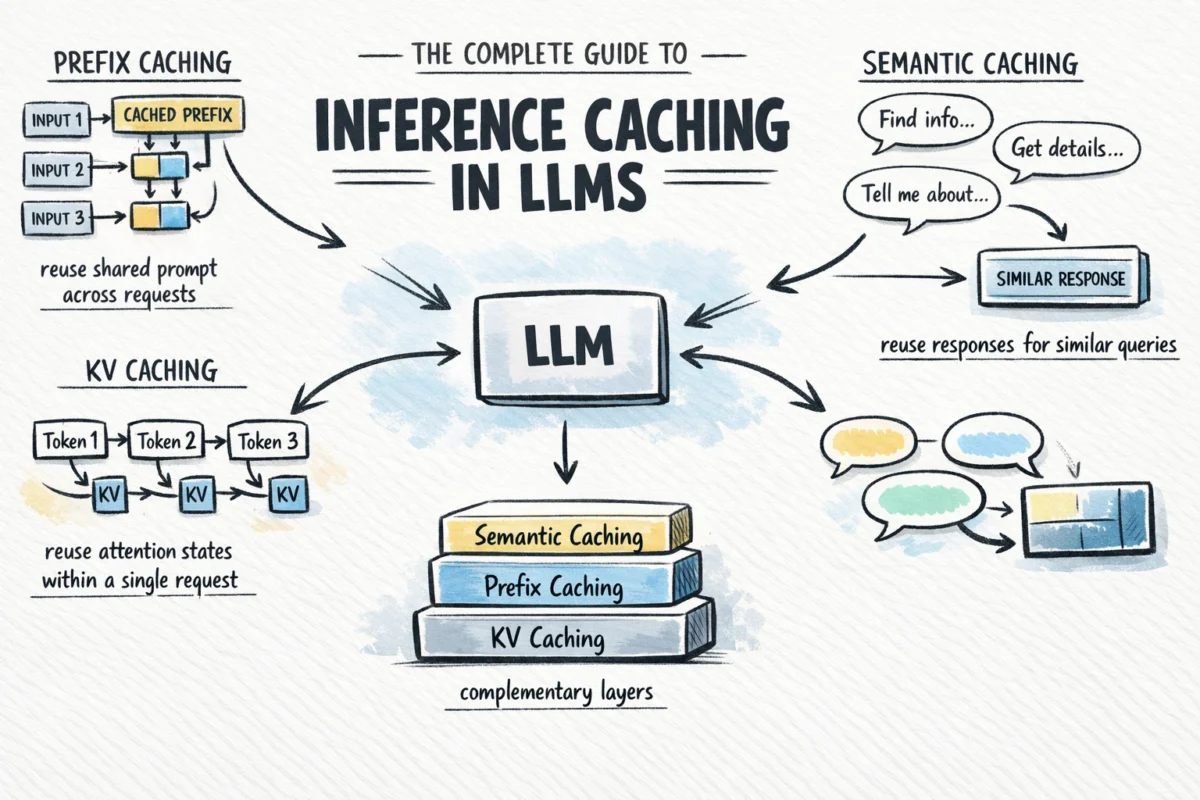

3. Optimizing Efficiency with Result Caching and TTL

The computational cost of machine learning inference is often far higher than that of standard CRUD (Create, Read, Update, Delete) operations. In a high-traffic environment, redundant computations represent both a latency bottleneck and a significant cloud expenditure. If a recommendation engine receives ten identical requests for the same user ID within a single minute, recalculating the embeddings ten times is an inefficient use of GPU resources.

A @cache_result decorator with a Time-To-Live (TTL) parameter addresses this by storing the output of a function based on a hash of its inputs. The inclusion of a TTL is what makes this pattern suitable for production. In dynamic environments, data becomes "stale." A prediction made five minutes ago may no longer be valid if the user’s recent behavior has changed.
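A minimal in-process sketch of this pattern follows. It assumes that all arguments are hashable and uses them directly as a dictionary key, which hashes them internally. The ttl_seconds and clock parameters are names invented for this example, and the cache is unbounded and not thread-safe, so it illustrates the idea rather than replacing a shared cache such as Redis.

```python
import functools
import time


def cache_result(ttl_seconds, clock=time.monotonic):
    """Cache results per argument set and expire them after ttl_seconds.

    `clock` is injectable so that expiry can be tested deterministically.
    """
    def decorator(func):
        store = {}  # key -> (expiry_time, value)

        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            # Arguments must be hashable to serve as a dictionary key.
            key = (args, tuple(sorted(kwargs.items())))
            now = clock()
            entry = store.get(key)
            if entry is not None and entry[0] > now:
                return entry[1]  # fresh hit: skip the expensive call
            value = func(*args, **kwargs)
            store[key] = (now + ttl_seconds, value)
            return value

        wrapper.cache_clear = store.clear  # manual invalidation hook
        return wrapper
    return decorator
```

Decorating a function with @cache_result(ttl_seconds=30) means that repeated calls with the same arguments inside a 30-second window reuse the stored value, while the first call after expiry recomputes it. Inputs that are not hashable, such as NumPy arrays, would first need to be converted into a stable key.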

The strategic implication of intelligent caching is significant. For many enterprises, implementing even a 30-second cache on high-frequency endpoints has reportedly resulted in a 40% to 60% reduction in compute costs, although the actual saving depends on how often identical requests repeat within the TTL window. This allows organizations to scale their AI offerings without a linear increase in their cloud service provider bills.

4. Memory-Aware Execution in Constrained Environments

Machine learning models, particularly Large Language Models (LLMs) and deep neural networks, are notorious for their high memory footprints. In containerized environments like Kubernetes, exceeding a memory limit results in an immediate "OOMKill" (Out Of Memory Kill), which terminates the offending container along with any requests it was serving. This is particularly problematic during batch processing or when multiple models are co-located on the same hardware.

The @memory_guard decorator acts as a tactical supervisor. By utilizing libraries such as psutil, the decorator can inspect the system’s current RAM utilization before allowing a memory-intensive function to execute. If the system is near its threshold, the decorator can take several actions: trigger Python’s garbage collector, log a high-priority alert to the DevOps team, or act as a "circuit breaker" that rejects or defers the call until resources are freed.
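The following sketch shows one way such a guard could look. The names max_percent, usage_fn, and MemoryPressureError are hypothetical. The default probe uses psutil.virtual_memory().percent, which reports system-wide usage; inside a container this may not reflect the container's own memory limit, so a cgroup-aware probe could be passed in through usage_fn instead.

```python
import functools
import gc


class MemoryPressureError(RuntimeError):
    """Raised when memory usage stays above the threshold (hypothetical name)."""


def _system_memory_percent():
    # psutil is a third-party package; it is imported lazily so the guard
    # can be exercised with a custom probe even where psutil is absent.
    import psutil
    return psutil.virtual_memory().percent


def memory_guard(max_percent=85.0, usage_fn=_system_memory_percent):
    """Refuse to run the wrapped function while memory usage is too high."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            if usage_fn() >= max_percent:
                gc.collect()  # first remedy: reclaim unreachable objects
                used = usage_fn()  # measure again after collection
                if used >= max_percent:
                    raise MemoryPressureError(
                        f"memory at {used:.1f}%, limit is {max_percent:.1f}%")
            return func(*args, **kwargs)
        return wrapper
    return decorator
```

Raising a dedicated exception lets the caller decide how to degrade, for example by skipping a background job or serving a cached result, rather than letting the process run into the hard memory limit.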

This level of granular control is a departure from traditional "black-box" execution. It allows ML systems to degrade gracefully. Instead of the entire service crashing, the system might skip a non-essential background task or return a cached result, maintaining the core user experience during periods of high resource contention.

5. Unified Observability and Execution Monitoring

The final pillar of production ML engineering is observability. When a model behaves unexpectedly, engineers need to know more than just the fact that an error occurred. They need to know the execution time, the specific input features that caused the anomaly, and the state of the system at the time of the call.

An @monitor decorator provides a standardized way to collect this metadata. Rather than relying on developers to remember to add logging statements to every new function, the decorator automatically captures start and end times, logs any exceptions with their full stack traces, and can even push metrics to platforms like Prometheus or Datadog.
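A minimal version of this decorator can be built on the standard logging module, as sketched below. The logger name "inference" and the extra field names fn_name and elapsed_ms are assumptions for this example, and pushing metrics to Prometheus or Datadog is left out because it depends on the client library in use.

```python
import functools
import logging
import time

logger = logging.getLogger("inference")  # logger name is an assumption


def monitor(func):
    """Log duration and outcome of every call to the wrapped function."""
    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        try:
            result = func(*args, **kwargs)
        except Exception:
            elapsed_ms = (time.perf_counter() - start) * 1000
            # logger.exception logs at ERROR level and attaches the traceback.
            logger.exception(
                "call failed",
                extra={"fn_name": func.__name__, "elapsed_ms": elapsed_ms})
            raise  # monitoring must not swallow the error
        elapsed_ms = (time.perf_counter() - start) * 1000
        logger.info(
            "call succeeded",
            extra={"fn_name": func.__name__, "elapsed_ms": elapsed_ms})
        return result
    return wrapper
```

Passing the metadata through extra attaches it to the log record as attributes, so a structured (for example JSON) formatter can emit fn_name and elapsed_ms as separate searchable fields. Logging the input features themselves is deliberately omitted here, since they may contain sensitive data.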

The broader impact of this approach is the democratization of debugging. When every function in an inference pipeline is wrapped in a consistent monitoring decorator, the resulting logs are structured and searchable. This allows for the use of automated anomaly detection on the logs themselves, identifying performance regressions that might be too subtle for human observers to notice.

Chronology of Development: From Research to Reliability

The evolution of these decorator patterns mirrors the broader history of machine learning. In the early 2010s, the focus was primarily on algorithmic breakthroughs. Code was often "one-off" and designed for local execution. As the industry moved into the "MLOps" era (circa 2017 to present), the focus shifted toward the lifecycle of the model.

- The Experimental Phase: Models were manually exported and wrapped in basic Flask or Django apps.

- The Integration Phase: Engineers realized that "code rot" and data drift were breaking models in production.

- The Standardization Phase: Patterns like the five decorators discussed here became recognized as "best practices" for building resilient, enterprise-grade AI.

Analysis of Implications for the AI Industry

The adoption of these Python-native engineering patterns signifies the maturation of the artificial intelligence field. We are moving away from a period where "AI" was treated as a special, fragile exception to standard software engineering rules. Today, the expectation is that ML code must be as robust as the core banking systems or e-commerce engines it supports.

For organizations, the implementation of these decorators leads to a measurable increase in "Engineering Velocity." Because operational concerns like retries and logging are handled by decorators, data scientists can focus on improving model architectures while engineers can manage the infrastructure with confidence.

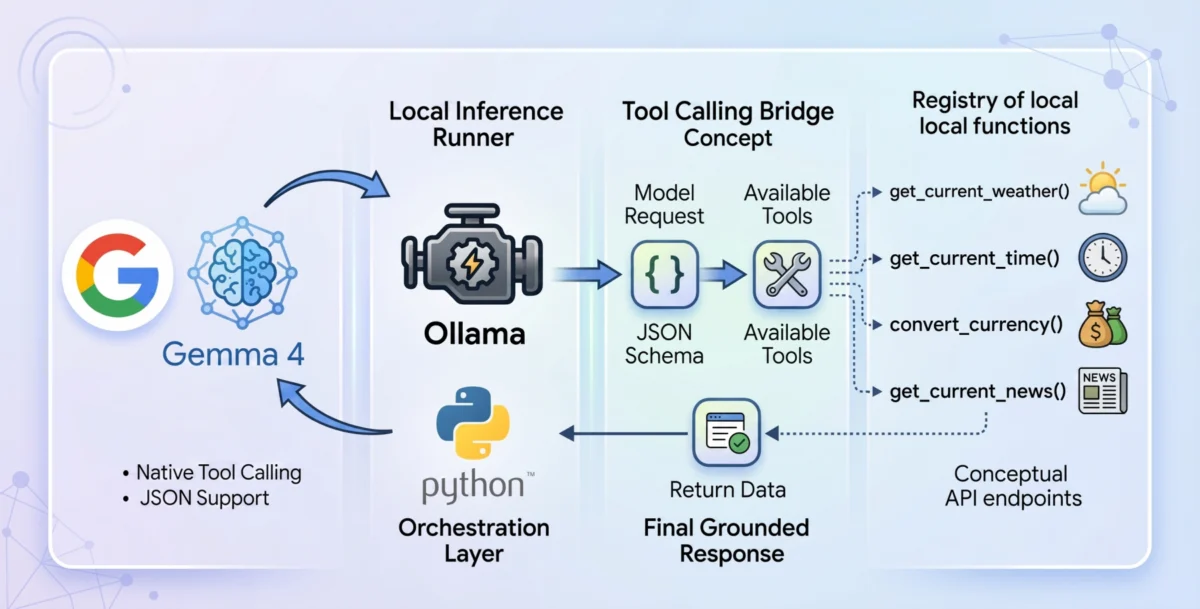

Furthermore, as the industry moves toward "Agentic AI"—where models take actions in the real world—the need for these guards becomes even more critical. A retry decorator isn’t just about efficiency; it’s about ensuring a digital agent doesn’t give up on a task due to a momentary flicker in a Wi-Fi signal. An input validation decorator is the difference between an AI correctly processing a transaction and an AI misinterpreting a decimal point and causing a financial error.

In conclusion, while the core of machine learning remains rooted in mathematics and statistics, the "last mile" of production is purely an engineering challenge. Python decorators provide an elegant, powerful, and scalable way to bridge this gap, turning experimental scripts into resilient, production-ready systems. As AI continues to permeate every sector of the global economy, these patterns will likely evolve from "best practices" to the fundamental requirements of the trade.