The Future of Large Language Models: Overcoming the Bottleneck of Context Rot and the Rise of Recursive Architectures

The rapid evolution of artificial intelligence has been defined by a singular, relentless pursuit: the expansion of the context window. From the early days of Generative Pre-trained Transformer (GPT) models, which could process only a few hundred words at a time, to the modern era of Google’s Gemini 1.5 Pro and Anthropic’s Claude 3.5, which boast capacities ranging from 200,000 to two million tokens, the industry has operated under the assumption that more space equals more intelligence. However, as these windows expand, a fundamental architectural bottleneck has emerged: the way large language models (LLMs) process information is inherently limited by a "single forward pass" design. This limitation has given rise to a phenomenon known in research circles as "context rot," where the quality of AI output degrades as the volume of input increases. In response, research is shifting toward recursive language models that navigate information rather than simply absorbing it.

The Engineering Paradox of the Context Window

At the heart of every modern LLM lies the Transformer architecture, a design that relies on an attention mechanism to weigh the importance of different words in a sequence. When a user provides a prompt, the model receives all the information—documents, conversation history, instructions, and examples—simultaneously. It then processes this data in one comprehensive pass to generate a response. This means that the context window serves as both the model’s total worldview and its only active working memory.
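To make the mechanism concrete, here is a minimal sketch of single-query scaled dot-product attention in plain Python. The toy vectors and the function names `softmax` and `attend` are illustrative assumptions, not taken from any particular model; production systems work on batched tensors with learned projections and many attention heads.

```python
import math

def softmax(scores):
    """Turn raw scores into non-negative weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(query, keys, values):
    """Single-query scaled dot-product attention over every token in the context."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    # The output is a weighted average of the value vectors.
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, output

# Toy context of three tokens with 2-dimensional keys and values (made-up numbers).
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
weights, output = attend([1.0, 0.0], keys, values)
```

Because the weights always sum to one, every token added to the context competes with all the others for a share of the same fixed budget.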

While increasing the size of this window allows for the inclusion of massive datasets, such as entire legal codebases or multi-volume novels, the underlying architecture remains static. The model must distribute its "attention" across every token in the window. As the window fills, the attention mechanism is stretched thin. This creates a predictable yet frustrating set of failure modes. In sparse contexts, models are remarkably sharp and accurate. However, as the context nears its limit, relevant information often becomes "lost in the middle," contradictions between different parts of the text go unresolved, and the model begins to hallucinate or ignore specific instructions.

The Phenomenon of Context Rot

The term "context rot" has become a colloquialism among AI researchers to describe the non-catastrophic but progressive degradation of output quality. Unlike a system crash or a total failure, context rot is insidious. It manifests as a subtle loss of nuance, a tendency to favor information at the very beginning or very end of a prompt (the primacy and recency effects), and a decrease in the logical coherence of long-form reasoning.

Data from recent benchmarks, such as the "Needle In A Haystack" test, illustrate this problem. In these tests, a specific, unrelated piece of information (the "needle") is placed at various points within a massive document (the "haystack"), and the model is then asked to retrieve it. While models like GPT-4 Turbo and Claude 3 show high retrieval accuracy when the needle is at the beginning or end, their performance frequently dips when the information is buried in the middle 50% of the context. This degradation suggests that even if a model can accept two million tokens, it does not necessarily understand or prioritize them with equal efficiency.
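The setup of such a test can be sketched in a few lines of Python. This is a simplified illustration, not the code of any published benchmark: the helper names `build_haystack` and `needle_position`, the filler text, and the needle sentence are all hypothetical, and the step that sends each haystack to a model and scores the answer is deliberately left out.

```python
def build_haystack(filler_sentences, needle, depth):
    """Insert `needle` at a relative depth (0.0 = start, 1.0 = end) of the filler text."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    position = round(depth * len(filler_sentences))
    sentences = filler_sentences[:position] + [needle] + filler_sentences[position:]
    return " ".join(sentences)

def needle_position(haystack, needle):
    """Return where the needle starts, as a fraction of the haystack length."""
    return haystack.index(needle) / len(haystack)

# Made-up filler and needle; a real run would use long natural-language documents.
filler = [f"Filler sentence number {i}." for i in range(1000)]
needle = "The secret passphrase is 'blue heron'."
depths = [0.0, 0.25, 0.5, 0.75, 1.0]
haystacks = {d: build_haystack(filler, needle, d) for d in depths}
```

Sweeping `depths` against several haystack lengths and recording whether the model recovers the passphrase produces the familiar accuracy grid used to report these results.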

A Chronology of the Context Race

The journey toward the current bottleneck began in 2017 with the publication of the seminal paper "Attention Is All You Need." Since then, the industry has followed a clear trajectory:

- 2017–2019: The Foundation. Early models like BERT, the original GPT, and GPT-2 featured context windows of 512 to 1,024 tokens, barely enough for a few paragraphs of text.

- 2020: The GPT-3 Era. OpenAI’s GPT-3 expanded the window to 2,048 tokens, allowing for basic multi-turn conversations and short article summaries.

- 2022: The Proliferation. Models began reaching 4,000 to 8,000 tokens, enabling more complex coding tasks and deeper conversational memory.

- 2023: The Great Expansion. Anthropic released Claude with a 100,000-token window, followed quickly by OpenAI’s GPT-4 Turbo with 128,000 tokens. This was the first time entire books could be fed into a model.

- 2024: The Era of Millions. Google DeepMind announced Gemini 1.5 Pro, featuring a context window of up to two million tokens. Despite this massive leap, developers began reporting the first significant instances of context rot in production environments.

Technical Constraints and Supporting Data

A major constraint behind context rot is the quadratic cost of the standard attention mechanism. The compute required to process a prompt grows with the square of the sequence length ($O(n^2)$), so a window 1,000 times longer requires roughly 1,000,000 times as many pairwise comparisons. For a model to attend to every token in a two-million-token window, the computational overhead becomes staggering.
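A quick back-of-the-envelope calculation shows how fast this grows. The sketch below counts query-key pairs for one attention head in one layer, assuming full (unmasked) attention; it ignores causal masking and any sparse or approximate attention variants, and the function name `attention_score_count` is purely illustrative.

```python
def attention_score_count(sequence_length):
    """Number of query-key pairs that full self-attention scores for one head in one layer."""
    return sequence_length * sequence_length

for n in (2_000, 128_000, 2_000_000):
    print(f"{n:>9,} tokens -> {attention_score_count(n):,} pairwise scores")

# Going from 2,000 to 2,000,000 tokens is a 1,000x longer sequence...
growth = attention_score_count(2_000_000) // attention_score_count(2_000)
# ...but a 1,000,000x larger number of pairwise scores.
```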

Furthermore, the softmax function used in attention normalizes the weights so that they sum to one, which pushes low-scoring tokens toward zero and forces every token to compete for a fixed budget of attention. In a massive context window, the "signal" of a single relevant sentence is often drowned out by the "noise" of thousands of irrelevant sentences. According to a 2023 study by researchers at Stanford, UC Berkeley, and Samaya AI titled "Lost in the Middle: How Language Models Use Long Contexts," the ability of LLMs to use information decreases significantly when that information is located in the middle of the input context. The study found that performance was U-shaped: high at the start and end, but poor in the center.
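The way a single relevant token's signal gets drowned out can be illustrated numerically. The following sketch assumes a deliberately simplified setting in which one relevant token receives a high attention score and every distractor receives the same low score. The scores 5.0 and 0.0 and the function name `needle_weight` are hypothetical values chosen for illustration, not measurements from a real model.

```python
import math

def needle_weight(needle_score, distractor_score, num_distractors):
    """Softmax weight on one relevant token competing with identical distractors."""
    needle = math.exp(needle_score)
    noise = num_distractors * math.exp(distractor_score)
    return needle / (needle + noise)

# Hypothetical scores: the relevant token scores 5.0, every distractor scores 0.0.
for n in (10, 1_000, 100_000, 2_000_000):
    print(f"{n:>9,} distractors -> weight {needle_weight(5.0, 0.0, n):.6f}")
```

Even though the relevant token's raw score never changes, its share of the attention shrinks steadily as more distractors are added to the context.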

The Shift Toward Recursive Language Models

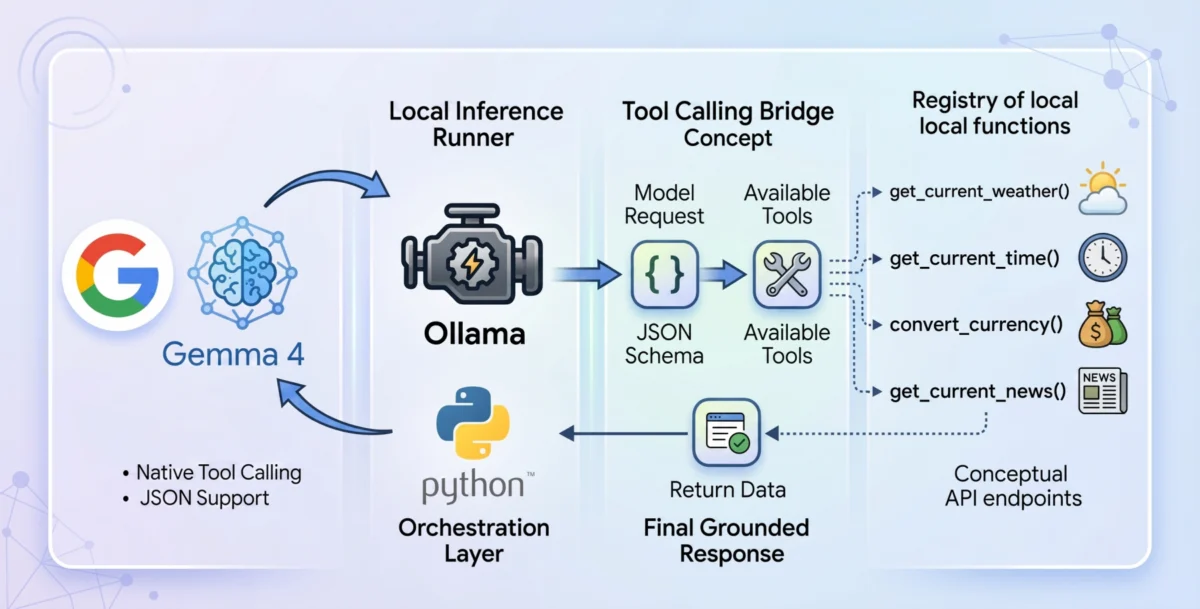

Recognizing that brute-force expansion of the context window is reaching a point of diminishing returns, a new research direction is gaining momentum: recursive language models. Rather than attempting to load every piece of data into a single forward pass, these systems are designed to navigate to the information they need.

The principles of recursive modeling involve the AI acting as an agent that can query its own memory, search external databases, and summarize its own thought processes before taking the next step. This is often implemented through "Agentic RAG" (Retrieval-Augmented Generation) or "MemGPT" architectures. Instead of a 100,000-word document being shoved into the context window, the model maintains a "scratchpad" or a hierarchical index. When asked a question, the model performs a recursive search: it looks at an index, identifies the relevant chapter, navigates to the specific paragraph, and only then brings that information into its active context.
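The index-to-chapter-to-paragraph walk described above can be sketched as follows. This is a toy illustration under strong assumptions: relevance is scored by simple word overlap, whereas a real system would more likely use embeddings or additional model calls, and the names `overlap` and `navigate` and the sample document are all hypothetical.

```python
def overlap(question, text):
    """Crude relevance score: number of words shared by the question and the text."""
    def tokenize(s):
        return {word.strip(".,?!").lower() for word in s.split()}
    return len(tokenize(question) & tokenize(text))

def navigate(question, index):
    """Walk index -> chapter -> paragraph and return only the best paragraph."""
    chapter = max(index, key=lambda title: overlap(question, title))
    paragraphs = index[chapter]
    best = max(paragraphs, key=lambda p: overlap(question, p))
    return chapter, best

# Hypothetical document: chapter titles mapped to their paragraphs.
index = {
    "Termination and notice periods": [
        "Either party may terminate the agreement with ninety days written notice.",
        "Termination does not affect obligations accrued before the notice date.",
    ],
    "Payment terms and invoicing": [
        "Invoices are payable within thirty days of receipt.",
        "Late payment accrues interest at two percent per month.",
    ],
}

chapter, context = navigate("How many days notice is needed to terminate?", index)
```

Only the single paragraph in `context` would be placed in the model's active window; the rest of the document stays outside it until a later step asks for it.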

This architectural shift moves the AI from a "passive reader" to an "active navigator." It mirrors human cognition; a researcher does not hold every word of a 500-page dissertation in their active consciousness but instead uses an index and citations to navigate to the necessary data points.

Industry Reactions and Statements

The shift toward navigation-based AI has prompted significant discussion among industry leaders. Engineers at firms like LangChain and LlamaIndex have noted that the "context window race" may be a distraction from the real goal of architectural efficiency.

"The industry is starting to realize that a million-token context window is a powerful tool, but it isn’t a replacement for sophisticated data retrieval and reasoning loops," stated one senior AI architect during a recent developer conference. "We are seeing a move away from ‘throw it all in the prompt’ toward ‘structured, multi-step reasoning.’"

Official documentation from major AI labs has also begun to include warnings about context density. Anthropic, for instance, provides extensive guides on "prompt engineering" specifically to mitigate the loss of information in long contexts, suggesting that users place the most critical instructions at the very end of the prompt to take advantage of the recency effect.
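In practice, this advice amounts to a particular ordering when a prompt is assembled. The helper below is a minimal sketch of that ordering; the function name `build_long_prompt` and the bracketed section labels are assumptions made for illustration and are not a format prescribed by any vendor.

```python
def build_long_prompt(documents, instructions):
    """Place bulky reference material first and the critical instructions last."""
    parts = []
    for number, text in enumerate(documents, start=1):
        parts.append(f"[Document {number}]\n{text}")
    # The instructions go at the very end so they sit closest to the response.
    parts.append(f"[Instructions]\n{instructions}")
    return "\n\n".join(parts)

instructions = "Summarize the key differences between the two reports in three bullet points."
prompt = build_long_prompt(
    ["First long report...", "Second long report..."],
    instructions,
)
```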

Broader Impact and Implications for the Future

The move toward recursive and navigational models has profound implications for the future of AI development. First, it addresses the economic and environmental costs of AI. Processing a two-million-token prompt is incredibly expensive in terms of GPU compute and electricity. If a recursive model can achieve the same result by only "attending" to 2,000 relevant tokens at a time, the cost of AI operations could drop by orders of magnitude.
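A rough comparison makes the scale of the potential savings visible. The sketch below simply counts tokens read, assuming cost is proportional to tokens processed and that a recursive agent needs ten navigation steps. Both assumptions are illustrative, and real pricing and step counts will vary.

```python
def tokens_processed_single_pass(context_tokens):
    """Tokens read when the whole context is sent in one prompt."""
    return context_tokens

def tokens_processed_recursive(tokens_per_step, steps):
    """Tokens read when the model makes several small, targeted calls."""
    return tokens_per_step * steps

single = tokens_processed_single_pass(2_000_000)
recursive = tokens_processed_recursive(tokens_per_step=2_000, steps=10)  # 10 steps is an assumption
savings_factor = single / recursive  # 2,000,000 / 20,000 = 100
```

Under these assumptions the recursive approach reads 100 times fewer tokens, and it would remain cheaper until it needed about 1,000 steps of 2,000 tokens each.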

Second, it paves the way for "infinite context" agents. If a model is not limited by what it can fit in a single forward pass, but rather by how well it can navigate a database, then the effective context of the AI becomes the size of the entire internet or a corporation’s entire internal server.

Finally, this shift marks a transition in the philosophy of Artificial General Intelligence (AGI). We are moving away from the idea of AI as a static "oracle" that knows everything at once, toward AI as a dynamic "worker" that knows how to find, verify, and synthesize information. While the experimental forms of recursive models are still being refined in the lab, their influence is already visible in the agentic workflows being deployed in software engineering, legal analysis, and medical research.

In conclusion, while the expansion of context windows has been a remarkable feat of engineering, it has revealed a fundamental truth: more data does not always lead to better decisions. The battle against context rot is driving the next great leap in AI architecture—one where the ability to navigate and reason over information is valued more highly than the ability to simply store it. The era of the "single forward pass" may soon give way to a more fluid, recursive future.