The Seductive Peril of the "Vibe Quant" Movement: LLMs and the Illusion of Trading Mastery

The burgeoning "vibe quant" movement, characterized by the use of Large Language Models (LLMs) to discover, validate, and deploy trading strategies, presents a compelling narrative: an AI that can digest financial literature, devise trading ideas, and rigorously backtest them, leaving human traders to merely supervise. While this proposition holds a certain allure, industry observers and seasoned market participants are raising significant concerns. This approach, they argue, not only risks substantial financial losses but, more critically, may impede the fundamental development of genuine trading acumen, potentially creating a generation of traders perpetually dependent on artificial intelligence without grasping the underlying market dynamics.

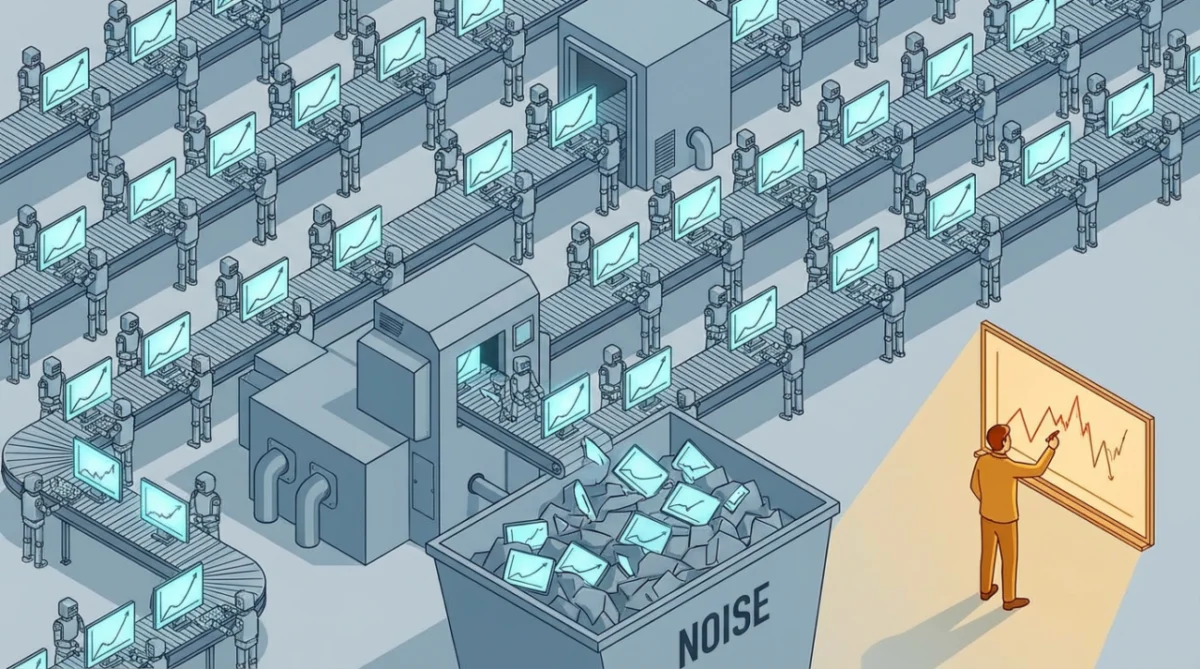

The core of the argument against the "vibe quant" methodology lies in its bypass of the essential learning process inherent in developing trading strategies. True trading expertise, proponents of traditional methods emphasize, is forged through a cyclical process of hypothesis generation, rigorous testing, critical analysis of results, and iterative refinement. Each cycle, when executed thoughtfully, builds a deeper understanding of market structure, the behavior of counterparties, and the structural constraints that can create exploitable opportunities. This accumulated knowledge forms a compounding flywheel, accelerating the trader’s ability to identify and capitalize on future edges.

Conversely, the "vibe quant" paradigm offloads the intellectual heavy lifting to LLMs and risks leaving the trader on a treadmill: plenty of motion, but an understanding that stays superficial. The LLM’s capacity to rapidly generate and test strategies can create an illusion of progress, but unless the human trader does the critical thinking needed to understand why a strategy might work, the fundamental learning is absent. The result is a permanent reliance on the AI, with the trader unable to judge whether the patterns the LLM identifies are robust, persistent, or genuinely tradeable in real-world market conditions.

The "Backtest Cycle of Doom" Turbocharged

This phenomenon echoes the well-documented "backtest cycle of doom" that has ensnared many novice traders. The cycle typically involves endlessly tweaking parameters, adding or removing stop-loss orders, optimizing filters, and making other technical adjustments in a quest to engineer a perfect equity curve. While this process feels technical and skill-intensive, it often lacks the critical thinking and decision-making under uncertainty that define true market analysis. Backtesting, in isolation, can become a purely technical exercise, divorced from the complex realities of market behavior.
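The way endless tweaking manufactures an attractive equity curve can be illustrated with a small simulation. The sketch below is not taken from any trader's actual workflow. It assumes, purely for illustration, that each "tweak" is an independent strategy whose daily returns are Gaussian noise with zero true edge; the function names `sharpe` and `best_of_n_on_noise` are hypothetical.

```python
import random
import statistics

def sharpe(returns, periods_per_year=252):
    """Annualized Sharpe ratio of per-period returns (risk-free rate assumed zero)."""
    sd = statistics.stdev(returns)
    if sd == 0:
        return 0.0
    return statistics.mean(returns) / sd * periods_per_year ** 0.5

def best_of_n_on_noise(n_variants, n_days=500, seed=0):
    """Simulate n_variants strategies with no edge and keep the in-sample winner.

    Each history is split in half. The first half plays the role of the
    backtest being optimized; the second half is data the search never saw.
    Returns (in-sample Sharpe of the winner, out-of-sample Sharpe of the winner).
    """
    rng = random.Random(seed)
    half = n_days // 2
    best_in, best_out = float("-inf"), 0.0
    for _ in range(n_variants):
        # Assumption: returns are pure noise, mean 0 and 1% daily volatility.
        rets = [rng.gauss(0.0, 0.01) for _ in range(n_days)]
        s_in = sharpe(rets[:half])
        if s_in > best_in:
            best_in, best_out = s_in, sharpe(rets[half:])
    return best_in, best_out

if __name__ == "__main__":
    for n in (1, 10, 200):
        s_in, s_out = best_of_n_on_noise(n)
        print(f"variants tried: {n:>3}  in-sample: {s_in:5.2f}  out-of-sample: {s_out:5.2f}")
```

Because the winner is chosen on the first half only, its in-sample Sharpe ratio tends to grow with the number of variants tried, while the second half, which is independent noise, has an expected Sharpe ratio of zero. Nothing in the loop requires a view on why the strategy should work, which is the point the article is making.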

The "vibe quant" movement, in essence, turbocharges this "backtest cycle of doom" by leveraging LLMs to automate and accelerate the less demanding aspects of strategy development. The AI can ingest vast amounts of data and execute complex coding tasks at speeds far exceeding human capability. However, the critical, nuanced thinking required to identify a genuine market edge—the part that truly matters—remains largely unaddressed.

The Missing Question: Who is Losing Money, and Why?

A foundational principle for developing a sustainable trading edge, according to seasoned traders, is to understand the counterparty. This involves asking: "Who is losing money on the other side of this trade, and why will they continue to do so?" This question is paramount because true edge often arises from structural constraints that prevent certain market participants from acting in ways that would eliminate the opportunity. These constraints can include mandate restrictions, capacity limitations, operational inefficiencies, or simply a lack of the specific analytical or computational resources possessed by the edge-seeking trader.

The problem, critics argue, is that this crucial question is conspicuously absent from much of the publicly available trading content and, consequently, from the training data of LLMs. The vast majority of online trading resources, research papers, and educational materials tend to focus on patterns, signals, and backtesting results, often presented as if they hold inherent predictive power. Academic literature, while sometimes rigorous, can also be driven by the desire to demonstrate mathematical proficiency rather than to offer actionable insights into market mechanics.

When an LLM is tasked with finding a trading edge, it naturally defaults to what it has been trained on: identifying patterns, constructing strategies, and running backtests. The fundamental question of the counterparty and their motivations—the very essence of identifying a sustainable edge—is often overlooked because this critical element is underrepresented in its training corpus.

The "Vibe Quant" Paradox: Identifying the Disease, Prescribing More of It

A recent illustration of this limitation emerged when a reader, who identifies as "Vibe Quant," fed an article on the "four hats of the solo trader" to an LLM. The reader, described as intelligent and thoughtful, provided a clear case study of the issue. The LLM’s self-diagnosis was remarkably accurate: "The temptation isn’t to blur Hats 1 and 2. It’s to skip Hat 1 entirely [Hat 1 being the edge-first thinking stage]… But ‘did we verify this edge exists in our universe, at our holding period, net of our constraints’ never happened. We built the bridge without testing the soil."

The LLM correctly identified the critical flaw: the bypass of edge research in favor of rapid implementation and validation. However, its proposed solution was telling. Instead of guiding the user towards a deeper understanding of edge development, the LLM suggested creating "new internal guidance systems for itself and some Python code to help validate." In essence, having diagnosed the problem of skipping edge research, it prescribed a solution that involved more automation and more validation—more of the same behavior that led to the problem in the first place, albeit at an accelerated pace.

This is not a bug in the LLM; it is its default operational mode. When prompted to think about mechanisms and edge, the LLM’s training compels it to engage in implementation, backtesting, and engineering. It can generate plausible-sounding explanations for why a strategy might work, but these are synthesized from a vast dataset where genuine edge thinking is often drowned out by noise and unsubstantiated claims. The LLM’s output can sound authoritative, but this authority stems from its linguistic capabilities, not necessarily from a deep understanding of market causality.

The Two Pillars of Trading Confidence: Mechanism and Evidence

Genuine confidence in a trading edge rests on two interdependent pillars: a plausible mechanism and supporting empirical evidence.

The Mechanism: The "Why" Behind the Trade

The mechanism provides the fundamental rationale for why an edge should exist. It answers critical questions:

- Who is the counterparty? Understanding the participants on the other side of a trade is crucial.

- What are their constraints? Identifying limitations that prevent them from acting optimally is key to finding an edge.

- Why will they continue to be there? A sustainable edge requires persistent market inefficiencies.

- Why can I compete for this edge? Assessing one’s own capabilities relative to the counterparty is vital.

Without a well-defined mechanism, any observed pattern in the data risks being mere curve-fitting—a spurious correlation that will not persist.

The Evidence: Validation in the Data

The evidence pillar is more straightforward: does the historical data support the hypothesis? This involves rigorous testing, statistical analysis, and an honest assessment of the strategy’s performance under various market conditions.
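One way to make the "honest assessment" concrete is to count every variant that was tried and raise the bar for significance accordingly. The sketch below is a minimal illustration, not a method prescribed by the article. It assumes independent, identically distributed returns, uses a normal approximation for the critical value, and applies a Bonferroni correction, which is only one of several possible multiple-testing adjustments. The names `t_stat`, `bonferroni_threshold`, and `survives` are hypothetical.

```python
import math
import statistics

def t_stat(returns):
    """t-statistic for the null hypothesis that the mean per-period return is zero."""
    n = len(returns)
    return statistics.mean(returns) / (statistics.stdev(returns) / math.sqrt(n))

def bonferroni_threshold(n_trials, alpha=0.05):
    """Two-sided critical value (normal approximation) after a Bonferroni correction.

    n_trials is the number of strategy variants examined, including discarded ones.
    """
    return statistics.NormalDist().inv_cdf(1 - alpha / (2 * n_trials))

def survives(returns, n_trials, alpha=0.05):
    """True if the strategy's t-statistic clears the corrected threshold."""
    return abs(t_stat(returns)) > bonferroni_threshold(n_trials, alpha)
```

A result that looks significant when treated as the only test can fail once the hundreds of variants generated along the way are counted. Even a result that passes is still only evidence: it says nothing about who is on the other side of the trade.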

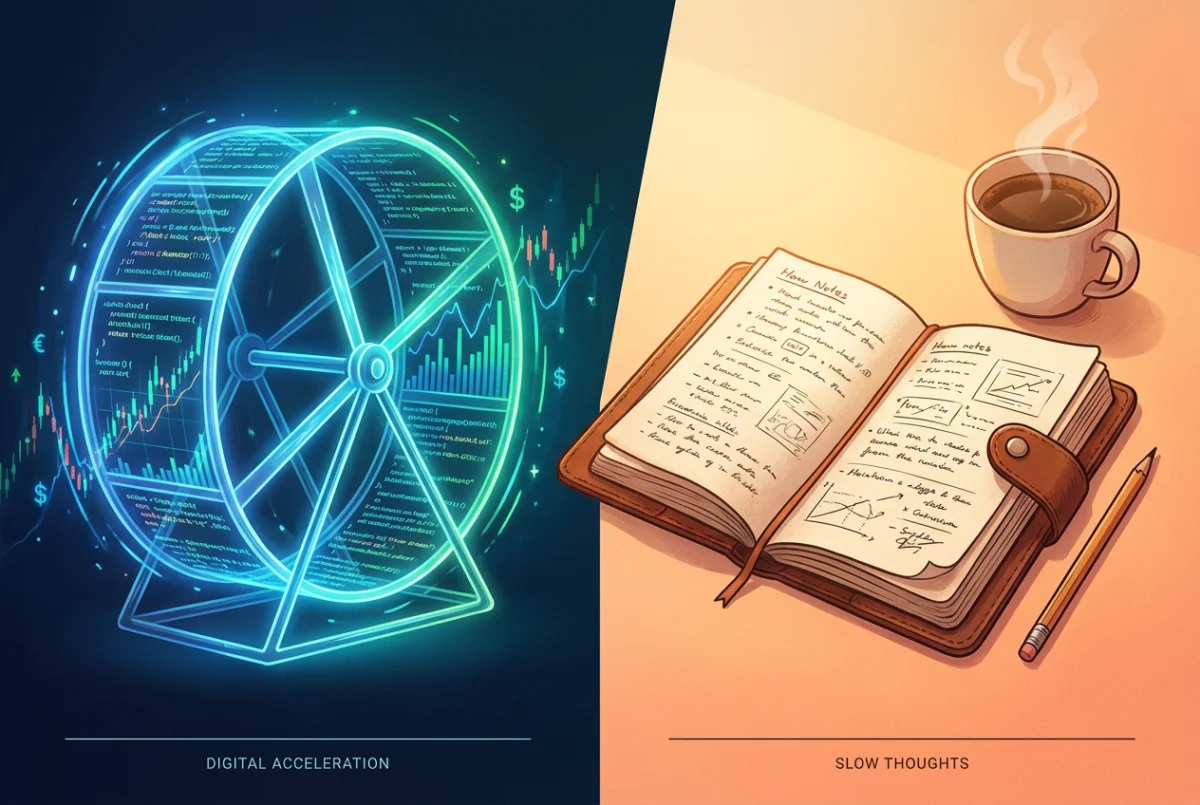

While LLMs can be invaluable tools for the "evidence" side—assisting with data manipulation, experimental design, and visualization—they are fundamentally incapable of teaching the human trader how to think about the "mechanism." This is because their underlying knowledge base is largely devoid of the deep, practical understanding of market causality that is required to develop robust mechanisms.

The Perilous Consequences of Mechanistic Blindness

The absence of a well-understood mechanism becomes acutely problematic when trading strategies inevitably encounter drawdowns. In such scenarios, a trader with a strong grasp of the underlying mechanism can analyze whether the counterparty has adapted, whether the structural constraint has been removed, or whether the market environment has fundamentally changed. This understanding provides a framework for making informed decisions: whether to hold the position, adapt the strategy, or exit altogether.

Conversely, a trader whose entire basis for a strategy is "the LLM found a pattern and wrote some validation code" is left with no diagnostic tools. When a drawdown occurs, their only recourse is to return to the LLM, requesting it to find a new pattern. This cycle perpetuates anxiety, as the trader lacks the foundational knowledge to trust their edge or to understand its vulnerabilities. The result is a constant state of tinkering, backtesting, and fretting, diverting energy from the productive work of developing genuine market insight.

The True Cost: Stunted Growth and Compounding Ignorance

The most profound consequence of the "vibe quant" approach, as highlighted by experienced traders, is the complete absence of compounding learning. The rigorous hypothesize-test-learn-iterate loop, even when arduous and sometimes painful, builds a trader’s understanding incrementally. Each successfully researched edge contributes to a growing mental model of the markets, informing and improving subsequent research efforts.

The "vibe quant" methodology, however, offers no such compounding effect. Because the LLM performs the cognitive heavy lifting, the human trader remains fundamentally dependent. Their knowledge base on day 1,000 is no deeper than it was on day 1. The problem is not merely that the LLM may identify flawed patterns; it is that even when the LLM uncovers valid edges, the human trader fails to develop the judgment needed to tell fleeting correlations from sustainable opportunities. They are accelerating their activity without advancing their understanding.

The Future of Trading: Augmentation, Not Abdication

It is crucial to distinguish between using LLMs as tools and abdicating the core intellectual work of trading to them. LLMs are undeniably powerful assets for augmenting existing skills. They excel at the "grunt work" of trading: cleaning and preparing data, writing and debugging code, summarizing complex research, and designing experimental frameworks. These are often referred to as "Hat 2, Hat 3, Hat 4" tasks in the context of trading operations. They enhance efficiency in areas where the trader already possesses expertise.

However, LLMs cannot teach the most vital skill: how to think about edge. This fundamental ability is cultivated through direct engagement with experienced professionals who possess an ingrained reflex for asking "Who’s on the other side?" It is developed by constructing and refining a robust mental model of market participants, their motivations, and their inherent constraints. Ultimately, it is honed through the repeated, often challenging, process of hypothesizing, testing, learning, and iterating.

LLMs can provide a fish, or even construct an impressive fishing rod. But they cannot impart the deep, practical knowledge of where the fish are biting, because the individuals who possess such knowledge are rarely broadcasting it freely on the internet. The "vibe quant" movement, while technologically sophisticated, risks leading traders down a path of superficial progress, creating an illusion of mastery while fundamentally hindering the development of the critical thinking and market intuition that are the true hallmarks of successful trading. The allure of AI-driven efficiency must not overshadow the indispensable human element of deep, experiential learning.