AI’s Evolving Role in Uncovering Zero-Day Vulnerabilities: A Shifting Cybersecurity Landscape

The cybersecurity industry is witnessing a profound transformation driven by the rapid advancement of artificial intelligence, particularly in its capacity to discover zero-day vulnerabilities. Such discovery was previously the domain of highly specialized, non-public research, but commercial and open-source AI models are now demonstrating significant progress in identifying previously unknown software flaws, a development that carries substantial implications for both defense and offense. Forescout’s Vedere Labs has published research indicating a dramatic improvement in AI’s ability to perform vulnerability research and even develop exploits, raising critical questions about the future of software security and the accessibility of sophisticated cyberattack tools.

The Accelerating Pace of AI-Driven Vulnerability Discovery

Just a year ago, AI-driven vulnerability research was in its infancy. According to Forescout’s Vedere Labs, 55% of tested AI models failed basic vulnerability research tasks, and 93% were unable to perform exploit development. These figures painted a picture of AI as a tool largely incapable of independently identifying and weaponizing software weaknesses. The pace of change since then has been remarkable. Forescout’s latest assessment, conducted in late 2023 and early 2024, reveals a dramatically altered scenario. By 2026, the firm projects that all tested AI models will be capable of completing comprehensive vulnerability research tasks. More alarmingly, half of these models are expected to autonomously generate working exploits, significantly lowering the technical barrier to entry for individuals seeking to develop cyberattacks.

The research involved a rigorous evaluation of 50 AI models, encompassing commercial, open-source, and even models sourced from the underground cybercrime ecosystem. This broad scope was crucial in understanding the diverse capabilities and potential applications of AI across the spectrum of cybersecurity actors. The findings indicate that the most sophisticated models tested, specifically Claude Opus 4.6 and Kimi K2.5, have reached a level of proficiency where they can identify and exploit vulnerabilities without the need for complex, expertly crafted prompts. This accessibility means that even attackers with limited technical expertise can potentially leverage these advanced AI tools to discover and exploit software weaknesses.

Rik Ferguson, VP of Security Intelligence at Forescout, highlighted the significance of these advancements. "These are widely available AI models exceeding human capability," he stated, acknowledging that while these commercial models might not yet match the sheer scale, speed, and quality of specialized, non-public systems like Anthropic’s Claude Mythos (which has been shown to identify thousands of zero-day vulnerabilities), their accessibility and growing competence are nonetheless transformative. The implication is clear: AI is no longer just an assistive tool; it is rapidly becoming a primary driver in the discovery of novel security flaws.

The RAPTOR Framework and Real-World Discoveries

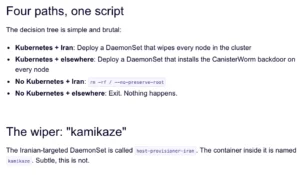

Forescout’s research was not purely theoretical. During testing, the firm used a combination of single prompts, the open-source RAPTOR agentic framework, and its own proprietary extensions to gather concrete evidence of AI’s capabilities. This approach led to the discovery of four new zero-day vulnerabilities in OpenNDS, a widely deployed open-source captive portal for network devices. The RAPTOR framework, described as an open-source, agentic AI system designed for cybersecurity research, offense, and defense, acts as an orchestrator, enabling AI models to perform complex sequences of actions.

One particularly noteworthy discovery involved a vulnerability in code that Vedere Labs had previously analyzed manually without finding the flaw. This underscores AI’s potential to uncover flaws that human analysts, despite their expertise, might overlook due to the sheer volume of code or the subtle nature of the bug. Ferguson elaborated on this point, emphasizing that the AI’s ability to explore code paths and identify anomalous behaviors can surpass human cognitive limitations in certain contexts.

Lowering the Barrier to Entry for Vulnerability Exploitation

The implications of AI-powered vulnerability discovery extend beyond the technical capabilities of the models themselves. The cost and accessibility of these tools are also critical factors. Forescout’s analysis revealed that while commercial models like Claude Opus 4.6 demonstrated the strongest performance in their tests, they come with a significant price tag, with costs potentially reaching $25 per million output tokens. This economic barrier, while substantial, is still considerably lower than the cost of employing specialized human security researchers.

On the other hand, open-source alternatives are rapidly becoming viable options for a wider range of users. Models such as DeepSeek 3.2 are capable of handling basic vulnerability research tasks at a fraction of the cost, with all tested tasks in Forescout’s evaluation costing less than $0.70. This democratizes access to sophisticated vulnerability research tools, making them available not only to large cybersecurity firms and well-funded organizations but also to smaller entities, independent researchers, and potentially, less scrupulous actors.

For context, Anthropic’s Claude Mythos, while not directly evaluated in Forescout’s report for cost-effectiveness in this specific study, is slated to be available to participants at $25 per million input tokens and $125 per million output tokens. This pricing positions it as a premium offering, likely for advanced research and development, but still within the realm of commercial accessibility.
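To make the per-token prices above concrete, the following is a minimal sketch of the arithmetic. The prices are the Claude Mythos figures quoted above; the token counts are hypothetical, chosen only for illustration, and do not come from Forescout’s report.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPricing:
    """Prices in US dollars per one million tokens."""
    input_per_million: float
    output_per_million: float


def task_cost(pricing: TokenPricing, input_tokens: int, output_tokens: int) -> float:
    """Return the dollar cost of one task given its token usage."""
    total = (input_tokens * pricing.input_per_million
             + output_tokens * pricing.output_per_million)
    return total / 1_000_000


# Prices quoted in the article for Claude Mythos.
MYTHOS = TokenPricing(input_per_million=25.0, output_per_million=125.0)

# Hypothetical usage: 200,000 input tokens and 40,000 output tokens.
cost = task_cost(MYTHOS, input_tokens=200_000, output_tokens=40_000)
print(f"${cost:.2f}")  # prints "$10.00"
```

Under these assumed token counts, the input and output portions each contribute $5, which shows how a higher output price can dominate the bill even when far fewer output tokens are generated.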

The emergence of a tiered approach to AI model utilization, where users select models based on task complexity and cost, is becoming an increasingly practical strategy for both defenders and attackers. Organizations can leverage more affordable open-source models for routine scanning and initial analysis, reserving more powerful, albeit expensive, commercial models for deeper dives or critical threat hunting. Conversely, attackers can employ similar strategies, optimizing their resource allocation for maximum impact.
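The tiered selection described above can be sketched as a simple routing rule: pick the cheapest model whose capability meets the task’s needs. Everything in this example is an assumption made for illustration, including the tier names, the 1-to-3 capability scale, and the per-task cost estimates; none of it is taken from Forescout’s report.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str            # hypothetical label, not a real product name
    capability: int      # hypothetical scale: 1 = basic, 3 = most capable
    est_cost_usd: float  # hypothetical estimated cost per task


TIERS = [
    ModelTier("open-source-basic", capability=1, est_cost_usd=0.70),
    ModelTier("mid-range", capability=2, est_cost_usd=3.00),
    ModelTier("commercial-frontier", capability=3, est_cost_usd=10.00),
]


def pick_model(required_capability: int, tiers=TIERS) -> ModelTier:
    """Return the cheapest tier that meets the required capability."""
    eligible = [t for t in tiers if t.capability >= required_capability]
    if not eligible:
        raise ValueError("no tier meets the required capability")
    return min(eligible, key=lambda t: t.est_cost_usd)


# Routine scanning goes to the cheapest tier; a deep dive goes to the top tier.
print(pick_model(1).name)  # prints "open-source-basic"
print(pick_model(3).name)  # prints "commercial-frontier"
```

A real deployment would likely estimate task complexity dynamically and escalate to a stronger tier only when a cheaper one fails, but the cost-versus-capability trade-off is the same.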

The Inevitable Infiltration of Unknown Vulnerabilities

Forescout’s overarching conclusion is a stark warning: organizations must operate under the assumption that their environments contain unknown vulnerabilities that AI will inevitably discover. This is amplified by the fact that not only can advanced commercial models uncover these flaws, but the research also suggests that open-source models can achieve similar breakthroughs. When combined with large-scale initiatives like Project Glasswing, which aims to surface thousands of zero-days in critical software, the threat landscape becomes undeniably complex. The question is no longer if unknown vulnerabilities will be found, but when and by whom.

The report implicitly suggests that the traditional reliance on human-driven vulnerability discovery and patching cycles may become insufficient in the face of AI’s accelerating capabilities. The speed at which AI can scan, analyze, and potentially exploit code could outpace the ability of organizations to detect and remediate these threats. This necessitates a paradigm shift in cybersecurity strategy, moving towards more proactive, resilient, and AI-aware defense mechanisms.

Background and Context: The Evolution of AI in Cybersecurity

The journey of AI in cybersecurity has been a gradual one, marked by distinct phases. Initially, AI was primarily employed for anomaly detection, behavioral analysis, and threat intelligence correlation. Machine learning algorithms were trained on vast datasets of network traffic and system logs to identify patterns indicative of malicious activity. This phase was characterized by AI as an assistant, augmenting human analysts by sifting through massive amounts of data.

The next evolutionary step saw AI being applied to more proactive tasks, such as identifying potential vulnerabilities in code through static and dynamic analysis. However, these early applications were often limited by their ability to understand complex code structures or to generate actionable exploit code. The breakthrough in AI’s generative capabilities, particularly with large language models (LLMs), has been the catalyst for the current rapid advancement in vulnerability discovery. LLMs, trained on massive amounts of text and code, have developed a sophisticated understanding of programming languages and software architecture, enabling them to reason about code, predict potential flaws, and even generate functional exploit snippets.

The development of agentic AI frameworks, such as RAPTOR, further amplifies these capabilities. Agentic AI refers to AI systems that can act autonomously to achieve goals, breaking down complex tasks into smaller, manageable steps and executing them without constant human intervention. In the context of vulnerability research, an agentic AI could be tasked with finding a specific type of vulnerability in a target system, then autonomously explore the codebase, identify a potential flaw, develop a proof-of-concept exploit, and even attempt to gain unauthorized access.
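The step-by-step behavior described above can be illustrated with a deliberately simplified orchestration loop. This is a generic sketch, not RAPTOR’s actual API: the step functions, file names, and state keys are all hypothetical, the plan is fixed rather than generated by a model, and the "analysis" step is a trivial stand-in for a model call. It ends by handing findings to a human reviewer.

```python
from typing import Callable, Dict, List

# Each step receives the shared state and returns an updated copy.
Step = Callable[[Dict], Dict]


def enumerate_files(state: Dict) -> Dict:
    # Hypothetical file names; a real agent would walk the target codebase.
    return {**state, "files": ["portal.c", "auth.c"]}


def flag_candidates(state: Dict) -> Dict:
    # Stand-in for a model call that reviews each file for suspicious code.
    flagged = [f for f in state["files"] if "auth" in f]
    return {**state, "candidates": flagged}


def write_report(state: Dict) -> Dict:
    # Summarize the findings so a human analyst can triage them.
    n = len(state["candidates"])
    report = f"{n} candidate(s) for human review: {state['candidates']}"
    return {**state, "report": report}


def run_agent(goal: str, plan: List[Step], max_steps: int = 10) -> Dict:
    """Execute the planned steps in order, passing state between them."""
    state: Dict = {"goal": goal}
    for step in plan[:max_steps]:  # cap the number of steps executed
        state = step(state)
    return state


result = run_agent(
    "review authentication code",
    [enumerate_files, flag_candidates, write_report],
)
print(result["report"])  # prints "1 candidate(s) for human review: ['auth.c']"
```

In an actual agentic framework the model would choose and reorder steps itself based on intermediate results; the fixed list here only shows how a larger goal is decomposed into smaller actions that share state.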

Timeline of AI Advancements in Vulnerability Research (Illustrative)

- Early 2010s: Initial applications of machine learning for anomaly detection and threat intelligence. AI primarily acts as a data analysis assistant.

- Mid-to-Late 2010s: AI begins to be explored for static and dynamic code analysis to identify potential vulnerabilities. Capabilities are limited and often require significant human oversight.

- Early 2020s: Emergence of advanced LLMs capable of understanding and generating code. Early experiments in AI-assisted vulnerability discovery.

- 2023-2024: Significant improvements in commercial and open-source LLMs lead to demonstrable capabilities in identifying zero-day vulnerabilities. Development of agentic AI frameworks for more autonomous research. Forescout’s Vedere Labs publishes findings on the rapid progress in this area.

- 2025-2026 (Projected): Widespread adoption of AI for comprehensive vulnerability research across most tested models. Half of models expected to autonomously generate working exploits.

Reactions and Statements from Related Parties (Inferred)

Beyond Forescout’s own comments, no official statements from other parties are reported here. Research of this kind nonetheless typically elicits a range of responses from the cybersecurity community:

- AI Developers: Companies developing advanced AI models, such as Anthropic (Claude) and Moonshot AI (Kimi), would likely acknowledge the dual-use nature of their technology. They would emphasize their commitment to responsible AI development and collaborate with researchers and policymakers to mitigate potential misuse. They might also highlight their ongoing efforts to build safety guardrails into their models.

- Cybersecurity Vendors: Other cybersecurity firms would likely view this research as validation of the growing threat landscape and a call to action. They would emphasize the need for advanced detection and response solutions that can cope with AI-driven attacks and highlight their own investments in AI-powered security tools.

- Government and Regulatory Bodies: Agencies responsible for national security and cybersecurity would likely be closely monitoring these developments. They might consider new regulations, international collaborations, and increased investment in defensive cybersecurity capabilities. The potential for state-sponsored actors or sophisticated criminal groups to leverage these AI tools would be a significant concern.

- Open-Source Community: The open-source AI community would likely see this as an opportunity to contribute to both offensive and defensive capabilities. There would be a push to develop and share open-source tools and research that can help defenders counter AI-powered threats. However, the risk of these tools falling into the wrong hands would also be a significant consideration.

Broader Impact and Implications

The findings from Forescout’s Vedere Labs paint a picture of a rapidly evolving cybersecurity paradigm. The democratization of sophisticated vulnerability discovery tools has profound implications:

- Increased Attack Surface: As more actors gain access to potent AI-powered tools, the likelihood of widespread vulnerability discovery and exploitation rises sharply. Organizations that have historically counted on their flaws being too obscure or too difficult to find and exploit will see that protection diminish.

- Accelerated Threat Landscape: The speed at which AI can identify and potentially exploit vulnerabilities means that the window defenders have to patch before a flaw is exploited will shrink, and the pace of cyberattacks could accelerate significantly. Traditional patching cycles may become insufficient.

- Shift in Defensive Strategies: Cybersecurity defenses will need to become more adaptive and proactive. This includes embracing AI for defense, implementing continuous monitoring and automated response, and focusing on building resilient systems that can withstand novel attacks. Zero-trust architectures and robust incident response plans will become even more critical.

- The Arms Race Continues: The development of AI for offensive purposes will inevitably spur further innovation in AI for defensive purposes. This creates an ongoing arms race between attackers and defenders, where both sides leverage increasingly sophisticated AI technologies.

- Ethical and Regulatory Challenges: The ease with which AI can be used to discover and exploit vulnerabilities raises significant ethical and regulatory questions. Discussions around AI governance, responsible disclosure, and the legal implications of AI-driven cyberattacks will become more urgent.

In conclusion, Forescout’s research serves as a critical wake-up call. The era of AI-driven zero-day vulnerability discovery is not a distant future; it is here, and its capabilities are rapidly expanding. The cybersecurity industry, governments, and organizations worldwide must proactively adapt to this new reality, investing in advanced defenses and fostering collaboration to navigate the complex and evolving threat landscape that AI is helping to shape. The ability of AI to find and exploit software bugs without human intervention marks a pivotal moment, demanding a comprehensive re-evaluation of how we secure our digital world.